TL;DR

The average SaaS activation rate is 36%. The best product-led growth companies run at 40–50%. If you don't know where your product sits in that range, you can't know whether your onboarding investments are closing a real gap or optimizing something that was never the constraint. This article covers what product adoption benchmarking analysis is and why it produces different answers than internal measurement alone; the five adoption metrics worth benchmarking (activation rate, time-to-value, feature adoption depth, tour completion rate, and 30-day cohort retention); concrete industry benchmarks for each, drawn from a study of 500+ SaaS products and Jimo's analysis of 1,025 product tours; a five-step process for running a benchmarking analysis against your own data; and how to turn the gaps it reveals into a prioritized action list rather than just a score.

You've rebuilt the onboarding flow twice. You added a checklist, then replaced it with a guided tour, then added the checklist back. Activation moved 3 points in one quarter and gave back 2 the next. Leadership wants to know if the investment is working. You don't have a clean answer, because you don't actually know if 34% activation is a crisis or a reasonable starting point for your category.

That's the problem product adoption benchmarking analysis solves — and it's a different problem from measurement.

Most product teams have data. They track activation rates, time-to-value, feature adoption numbers. What they don't have is an external reference point for any of it. So they end up in a familiar loop: picking targets based on gut feel, running interventions against those targets, reporting directional progress to leadership, and never quite being able to answer whether the numbers are good. Whether the gap is worth the resources being put into it. Whether they're competing at the level the market requires or quietly underperforming against a standard no one on the team has looked up.

The average SaaS activation rate is 36%, with a median of 30%, based on a benchmarking study of over 500 products by Lenny Rachitsky and Yuriy Timen. The best product-led growth companies run at 40–50%. That's a gap of 10 to 20 points between median and best-in-class, wide enough that your position within it likely has a direct line to trial-to-paid conversion, 90-day retention, and expansion revenue.

Knowing where you sit in that range changes the conversation. A 34% activation rate looks like a crisis if your category median is 45%. It looks like a defensible starting point if most comparable products are stuck at 25%. It tells you whether to treat activation as your primary growth constraint or focus resources somewhere else entirely. Without that frame, you're making prioritization decisions based on internal trend lines that tell you what changed but not whether it matters.

This guide gives you the benchmarks, a process for applying them to your own data, and a framework for converting the gaps they reveal into a prioritized action list. If you're not yet tracking these metrics inside your product, start with our guide to how to measure product adoption first. If you already know your gaps and are looking for the interventions to close them, the guide to how to increase product adoption picks up where this one ends.

What is product adoption benchmarking analysis?

Product adoption benchmarking analysis is the practice of measuring your SaaS product's behavioral metrics (activation rate, time-to-value, feature adoption depth), setting them against what other products in your category actually achieve, and using that comparison to decide where to focus.

It's not the same as measuring product adoption. Measuring tells you what's happening inside your product, whereas benchmarking tells you whether what's happening is good enough. Those are different questions, and they produce different answers.

It's also not the same as traditional business benchmarking, which compares things like revenue per employee or cost structures across companies. Product adoption benchmarking is narrower and more actionable: it focuses specifically on the behavioral signals that predict whether users activate, retain, and expand — and whether yours are competitive.

The output isn't a score or a ranking. It's a prioritized answer to the question every product team eventually gets asked and rarely has data to answer cleanly: are we closing the right gaps, or just busy?

Why benchmarking your product adoption metrics actually matters (a lot)

Here's a number worth sitting with: 70% of SaaS users who churn do so in the first 30 days — not because your product failed them, but because they never got far enough in to find out if it would (Wyzowl). They signed up, clicked around, hit a moment of confusion or friction, and quietly left. No feedback. No cancellation email. Just gone.

That's an adoption problem. And the brutal part is that most teams don't know they have it until retention data confirms it weeks after the damage is done.

This is exactly what makes benchmarking so useful, and so underused. Without an external reference point, there's no way to know whether a 34% activation rate represents a crisis or a reasonable baseline for your category. So product teams do what any reasonable team would do: they set internal targets based on past performance, declare wins when they hit them, and move on. The dashboard stays green. Churn stays flat.

The numbers tell a different story. A 25% improvement in activation rate correlates with a 34% increase in revenue (FairMarkit). That's not a marginal efficiency gain. That's a growth lever sitting inside a metric most teams are actively misreading because they have no benchmark to read it against.

Consider what's actually at stake across each metric:

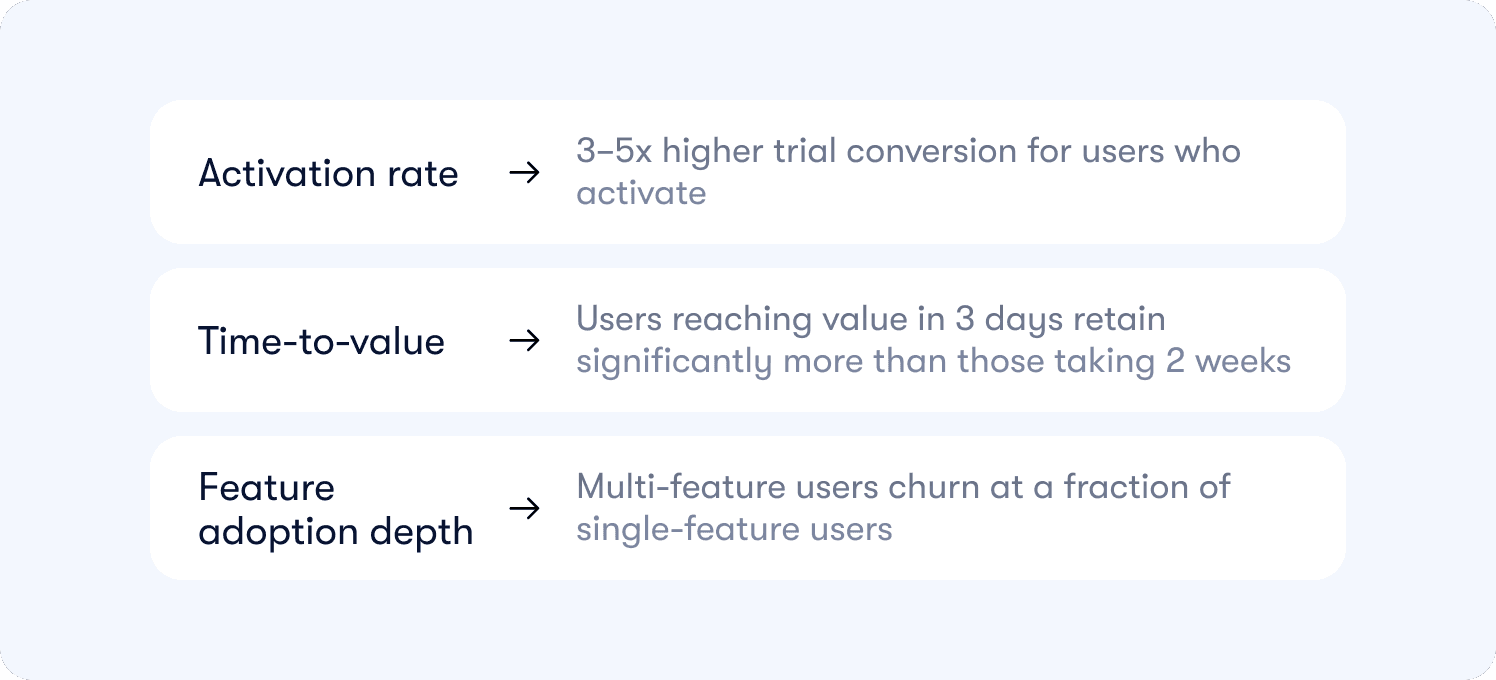

Activation rate is the first domino. Users who activate convert to paid at 3–5x the rate of those who don't. If your activation rate is sitting at 28% when the industry average is 36%, you're not underperforming by a few percentage points. You're activating roughly a fifth fewer users than the market baseline, and every point below that average is acquisition spend that never pays back.

Time-to-value is where most of the bleeding happens quietly. Industry benchmarks suggest users who reach first value within three days retain at significantly higher rates than those who take two weeks. If your free trial is 14 days and your median time-to-value is 11 days, half of your users get three days or fewer with the core value before the trial ends, and many decide before they've experienced the thing your product was built to do. That's not an onboarding problem. That's a revenue architecture problem.

Feature adoption depth is the difference between a user who stays and a user who expands. Single-feature users churn when a competitor offers that one capability cheaper, which they always eventually do. Users who adopt three or more core features develop product dependency that pricing pressure can't easily disrupt. Knowing where your feature adoption depth sits relative to product adoption metrics that predict retention turns what looks like an engagement question into a retention and expansion strategy.

Benchmarking doesn't change any of these numbers on its own. What it changes is whether you know which number is your actual constraint and whether the resources going into fixing it are proportional to what fixing it is worth.

The 5 product adoption metrics worth benchmarking (and what good actually looks like)

Before you can benchmark anything, you need to be measuring the right things. Not logins. Not session counts. Not the green arrows on your weekly dashboard that feel like progress and predict nothing.

The five metrics below are the ones that correlate with whether users stay, convert, and expand. Each one comes with the industry benchmark so you can immediately see where your product stands.

1. Activation rate

The percentage of new users who reach your defined "aha moment" action within 7 days of signing up.

Why it matters: Users who activate convert to paid at 3–5x the rate of those who don't. Everything downstream — retention, expansion, revenue — is amplified or choked off here first.

Industry benchmarks (Lenny Rachitsky & Yuriy Timen, 500+ SaaS products):

Bottom quartile: below 25%

Industry median: 30%

Industry average: 36%

Top-performing PLG companies: 40–50%

The diagnostic: If your activation rate is below 30%, you're losing the majority of every cohort before they've seen what your product can do. That's not a retention problem. It starts here.
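As a rough illustration of the definition above, here is a minimal Python sketch that computes a 7-day activation rate from signup and activation timestamps. The function name, the input shape, and the sample cohort are all hypothetical; your own event data will look different.

```python
from datetime import datetime, timedelta


def activation_rate(users, window_days=7):
    """Share of signups whose activation event lands inside the window.

    `users` is a list of (signup_time, activation_time_or_None) pairs.
    """
    if not users:
        return 0.0
    window = timedelta(days=window_days)
    activated = sum(
        1
        for signup, activated_at in users
        if activated_at is not None
        and timedelta(0) <= activated_at - signup <= window
    )
    return activated / len(users)


# Hypothetical cohort of four signups (illustrative data only).
cohort = [
    (datetime(2024, 1, 1), datetime(2024, 1, 3)),   # activated after 2 days
    (datetime(2024, 1, 1), datetime(2024, 1, 12)),  # activated after 11 days: too late
    (datetime(2024, 1, 2), None),                   # never activated
    (datetime(2024, 1, 2), datetime(2024, 1, 2)),   # activated the same day
]

rate = activation_rate(cohort)
print(f"7-day activation rate: {rate:.0%}")  # 7-day activation rate: 50%
```

Note that the denominator is every signup in the cohort, not just users who logged in again. Shrinking the denominator is the quickest way to make an activation rate look better than it is.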

2. Time-to-value (TTV)

The median number of days between a user's first login and their first activation event.

Why it matters: Users who reach first value within 3 days retain at significantly higher rates than those who take two weeks. Most free trials run 14 days. Do the math on what a 10-day TTV actually means for your conversion window.

Industry benchmarks:

Strong: first value within 1–3 days of signup

Acceptable: within 7 days

At-risk: beyond 7 days in a 14-day trial

The diagnostic: TTV is where friction hides. It doesn't look like a problem until you realize most users are making their purchase decision before they've experienced the thing your product was built to do.
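To make "do the math" concrete, the sketch below computes a median TTV, maps it to the bands above, and shows how many trial days are left once the median user reaches value. The sample values and function names are hypothetical, and the median here only covers users who eventually activated, so it will understate the problem if many users never activate at all.

```python
from statistics import median


def median_ttv_days(days_to_activation):
    """Median days from first login to first activation, over activated users."""
    return median(days_to_activation)


def ttv_band(ttv_days):
    """Map a median TTV to the bands listed above."""
    if ttv_days <= 3:
        return "strong"
    if ttv_days <= 7:
        return "acceptable"
    return "at-risk"


# Hypothetical days-to-activation for seven activated users.
sample = [1, 2, 4, 9, 10, 12, 13]
trial_length_days = 14  # assumed trial length

ttv = median_ttv_days(sample)
days_left = trial_length_days - ttv

print(ttv, ttv_band(ttv), days_left)  # 9 at-risk 5
```

In this made-up cohort, the median user has five days of the trial left after first value, and half the cohort has fewer than that.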

3. Feature adoption depth

The percentage of active users engaging with three or more core features within their first 30 days.

Why it matters: Single-feature users are price-sensitive and easy to churn. Multi-feature users build product dependency. Each additional feature they adopt deepens the switching cost and broadens the value they associate with your product.

Industry benchmarks:

Strong: 40%+ of active users adopting 3+ core features within 30 days

Average: 20–35%

At-risk: below 20% — users are treating your product as a point solution, not a platform

The diagnostic: Low feature adoption depth is often invisible until a cheaper competitor appears. By then it's too late to rebuild the dependency.
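One way to compute this metric, sketched in Python under the assumption that you can export the set of features each active user touched in their first 30 days. The feature names and user IDs are placeholders; which features count as "core" is a judgment call only you can make.

```python
def feature_adoption_depth(usage_by_user, core_features, min_features=3):
    """Share of active users who used at least `min_features` core features.

    `usage_by_user` maps a user id to the features that user touched
    in their first 30 days.
    """
    if not usage_by_user:
        return 0.0
    core = set(core_features)
    deep_users = sum(
        1
        for used in usage_by_user.values()
        if len(core & set(used)) >= min_features
    )
    return deep_users / len(usage_by_user)


# Hypothetical core feature set and usage export.
CORE = {"projects", "tasks", "reports", "integrations"}
usage = {
    "u1": ["projects", "tasks", "reports"],                  # 3 core features
    "u2": ["projects"],                                      # 1 core feature
    "u3": ["projects", "tasks", "profile_photo"],            # 2 core, 1 non-core
    "u4": ["tasks", "reports", "integrations", "projects"],  # 4 core features
    "u5": [],                                                # active, no core usage
}

depth = feature_adoption_depth(usage, CORE)
print(f"{depth:.0%}")  # 40%
```

Non-core features are deliberately ignored: a user who touches ten settings screens and one core feature is still a single-feature user for this purpose.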

4. Tour and checklist completion rate

The percentage of users who complete your primary onboarding tour or checklist.

Why it matters: It's the most-tracked metric in onboarding and, on its own, the least useful. A high completion rate with a flat activation rate means users clicked through your tour and learned nothing that changed their behavior. Completion only matters when it predicts activation — which is why it belongs here alongside the metrics that do.

Industry benchmarks (Jimo analysis of 1,025 product tours):

3-step tours: ~72% average completion

Standard tours (all lengths): average 27%, median 15%

AI-powered tours: ~44% completion, roughly 1.6x the standard average

Below 50% on a short tour: friction or irrelevance signal worth investigating

The diagnostic: If your tour completion is strong but activation isn't moving, your tour is counting the wrong actions as completion. Users are clicking Next, not doing the thing.
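A quick check for whether completion predicts activation is to compare activation rates for users who finished the tour against those who didn't. The sketch below assumes you can export one (completed_tour, activated) pair per user; the data is invented, and a gap between the two groups is a correlation, not proof that the tour caused it.

```python
def activation_by_tour_completion(users):
    """Return (activation rate of completers, activation rate of non-completers).

    `users` is a list of (completed_tour, activated) boolean pairs.
    """
    def rate(group):
        if not group:
            return 0.0
        return sum(1 for _, activated in group if activated) / len(group)

    completers = [u for u in users if u[0]]
    non_completers = [u for u in users if not u[0]]
    return rate(completers), rate(non_completers)


# Hypothetical export: 10 completers and 10 non-completers, 3 activations in each group.
users = (
    [(True, True)] * 3
    + [(True, False)] * 7
    + [(False, True)] * 3
    + [(False, False)] * 7
)

completer_rate, other_rate = activation_by_tour_completion(users)
print(completer_rate, other_rate)  # 0.3 0.3
```

When the two rates match, as they do in this example, finishing the tour tells you nothing about whether a user will activate.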

5. 30-day cohort retention

The percentage of users still active and performing meaningful actions 30 days after their first login.

Why it matters: This is the scoreboard. Every other metric in this list is a leading indicator that predicts where this number is going. If your 30-day retention is declining, the problem started upstream — in activation, TTV, or feature adoption — weeks before it shows up here.

Industry benchmarks:

Strong for B2B SaaS: 40%+ at day 30

Average: 25–35%

Below 20%: churn is structural, not seasonal

The diagnostic: 30-day retention is a lagging signal, which means by the time it moves, a cohort is already gone. Track it to validate that your other interventions are working — not as the metric you optimize week to week.
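"Still active" needs a precise rule before you can compute it. The sketch below uses one possible rule, which is an assumption rather than a standard: a user counts as retained if they perform at least one meaningful action in the 7 days starting on day 30 after first login. The user IDs, dates, and the 7-day grace window are all illustrative.

```python
from datetime import date, timedelta


def retention_30d(first_logins, activity, grace_days=7):
    """Share of a cohort with a meaningful action in [day 30, day 30 + grace_days).

    `first_logins` maps user id -> first login date.
    `activity` maps user id -> dates of meaningful actions.
    """
    if not first_logins:
        return 0.0
    retained = 0
    for user, start in first_logins.items():
        window_start = start + timedelta(days=30)
        window_end = window_start + timedelta(days=grace_days)
        if any(window_start <= day < window_end for day in activity.get(user, [])):
            retained += 1
    return retained / len(first_logins)


# Hypothetical cohort of four users.
first_logins = {
    "a": date(2024, 1, 1),
    "b": date(2024, 1, 1),
    "c": date(2024, 1, 5),
    "d": date(2024, 1, 5),
}
activity = {
    "a": [date(2024, 1, 2), date(2024, 2, 2)],  # active inside the day-30 window
    "b": [date(2024, 1, 3)],                    # early activity only
    "c": [date(2024, 2, 20)],                   # came back after the window closed
}

print(retention_30d(first_logins, activity))  # 0.25
```

Whatever rule you choose, keep it fixed across cohorts. Changing the definition of "active" between quarters makes the trend line meaningless.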

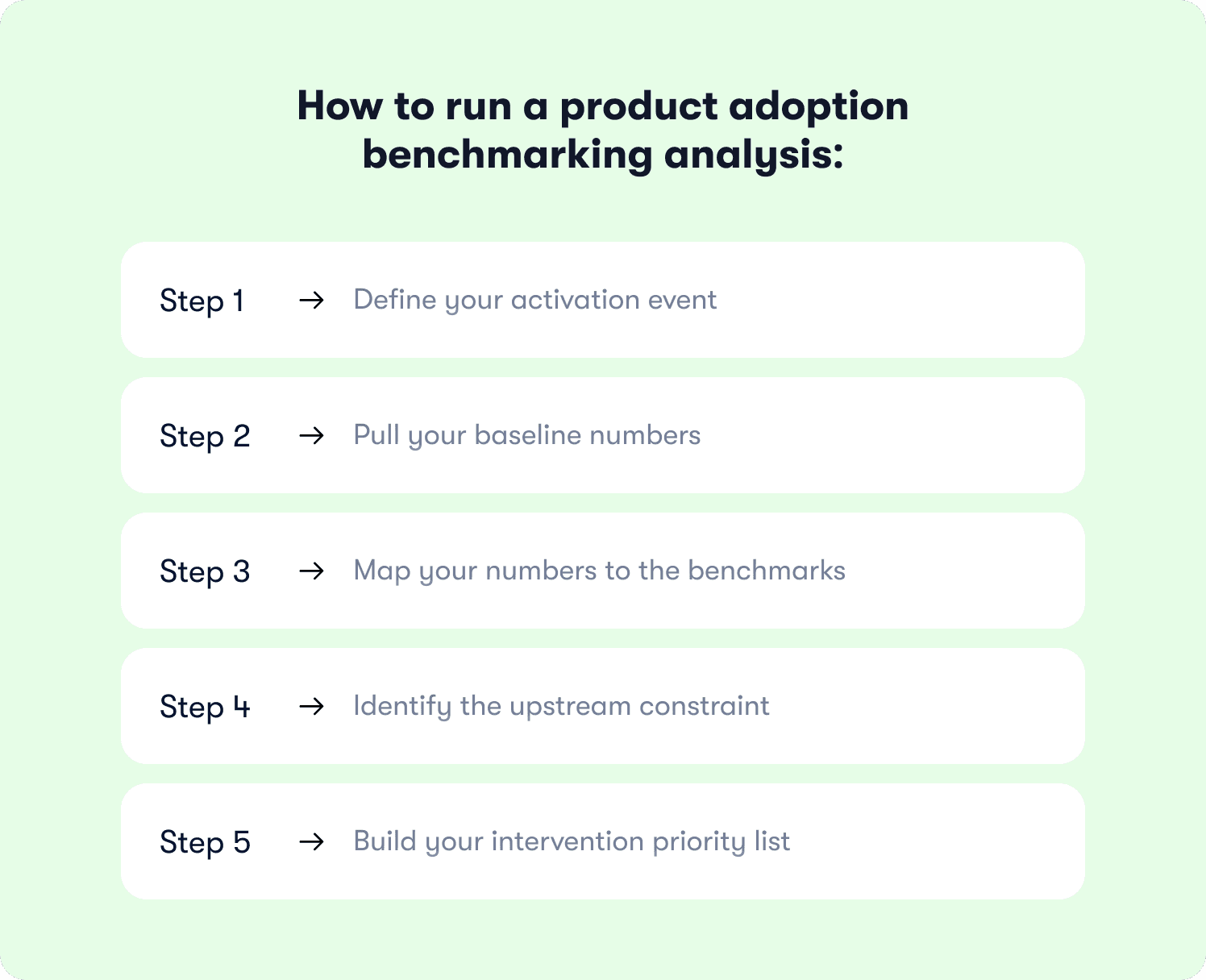

How to run a product adoption benchmarking analysis: a 5-step process

Knowing the benchmarks is step one. The actual work is comparing them against your own data in a way that produces a prioritized action list, not just a score. Here's how to do it.

Step 1: Define your activation event (if you haven't already)

You can't benchmark an activation rate you haven't defined. Before you pull any numbers, you need a specific, measurable action that represents the moment a user first extracts real value from your product.

Not "completed profile." Not "finished the onboarding tour." The actual behavior that, in your retention data, separates users who stay from users who churn.

For a project management tool: created a project, assigned a task, and invited a teammate

For a reporting tool: exported a report with live data

For a CRM: logged a contact and sent a first follow-up

If you're not sure which action is your real activation event, our guide to measuring product adoption has a step-by-step process for validating it against your retention data before you commit.

Step 2: Pull your baseline numbers

Calculate each of the five metrics above for your last two full cohort periods — typically the last 60 to 90 days, segmented by plan tier and acquisition channel.

Why segment? Because an overall activation rate of 38% can mask a paid-channel cohort converting at 61% and a self-serve cohort stuck at 19%. The aggregate looks fine. The self-serve segment is quietly hemorrhaging.

At minimum, pull:

Activation rate by cohort (7-day window)

Median time-to-value in days

% of users who engaged with 3+ core features in 30 days

Tour/checklist completion rate for your primary onboarding flow

30-day retention by cohort
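The segmentation point is easy to verify with arithmetic. The sketch below uses invented signup counts chosen so the segment rates match the 61% and 19% figures above, and shows how they blend into a healthy-looking 38% overall.

```python
def activation_rates(segments):
    """Return (overall activation rate, per-segment activation rates)."""
    per_segment = {
        name: s["activated"] / s["signups"] for name, s in segments.items()
    }
    total_signups = sum(s["signups"] for s in segments.values())
    total_activated = sum(s["activated"] for s in segments.values())
    return total_activated / total_signups, per_segment


# Hypothetical counts: only the resulting rates come from the example above.
segments = {
    "paid_channel": {"signups": 1900, "activated": 1159},
    "self_serve": {"signups": 2300, "activated": 437},
}

overall, per_segment = activation_rates(segments)
print(f"overall: {overall:.0%}")  # overall: 38%
for name, rate in per_segment.items():
    print(f"{name}: {rate:.0%}")  # paid_channel: 61%, then self_serve: 19%
```

The overall rate is a volume-weighted average, so a strong segment can carry a weak one for as long as the mix holds. If self-serve volume grows, the aggregate falls even though neither segment changed.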

Step 3: Map your numbers to the benchmarks

Put your metrics next to the industry benchmarks from the section above. Flag anything that falls below the industry median as a priority gap. Flag anything below the bottom-quartile threshold as a critical gap.

Two things to resist here:

Don't average across segments to make a gap disappear. A 19% self-serve activation rate is a 19% self-serve activation rate, regardless of what the enterprise segment is doing.

Don't treat beating the median as the finish line. If you're at 38% activation and the best PLG companies run at 40–50%, you're competitive but not compounding.
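The flagging rule in this step can be written down in a few lines. The sketch below applies it to activation rate only, using the 30% median and 25% bottom-quartile figures from the benchmarks above; the segment names and rates are hypothetical, and thresholds for the other four metrics would need to be filled in from the relevant benchmark lists.

```python
ACTIVATION_MEDIAN = 0.30           # industry median from the benchmarks above
ACTIVATION_BOTTOM_QUARTILE = 0.25  # bottom-quartile threshold from the benchmarks above


def classify_gap(value, median, bottom_quartile):
    """Flag a higher-is-better metric against median and bottom-quartile thresholds."""
    if value < bottom_quartile:
        return "critical gap"
    if value < median:
        return "priority gap"
    return "at or above median"


# Hypothetical activation rates per segment.
activation_by_segment = {
    "paid_channel": 0.61,
    "partner_referral": 0.28,
    "self_serve": 0.19,
}

flags = {
    name: classify_gap(rate, ACTIVATION_MEDIAN, ACTIVATION_BOTTOM_QUARTILE)
    for name, rate in activation_by_segment.items()
}
for name, flag in flags.items():
    print(f"{name}: {flag}")
```

This version assumes higher is better. For time-to-value, where lower is better, the comparisons would need to be reversed.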

Step 4: Identify the upstream constraint

Gaps rarely exist in isolation. A low 30-day retention rate almost always traces back to a TTV problem. A TTV problem usually traces back to a specific drop-off step in the activation funnel. A low feature adoption depth usually reflects a discovery failure in the first session, not a product quality issue.

Work backwards from your lowest-performing metric to find the upstream constraint. That's the gap worth fixing first — because addressing it will move multiple metrics downstream, not just one.

Step 5: Build your intervention priority list

For each critical gap, identify the specific intervention type and target segment. Be precise:

"Activation rate below median for self-serve, free-plan users" is actionable. "Activation is low" is not.

"TTV exceeds 7 days for users who skip the integration setup step" is actionable. "Onboarding is too slow" is not.

Once you have precise gap definitions, you have a ranked list of where to focus and which segments to target first. That list is the output of a benchmarking analysis. Not a score — a sequenced action plan.

What to do when your benchmarks reveal a gap

Finding out your activation rate is in the bottom quartile isn't a crisis. It's information. The product teams that stay stuck are the ones who treat a benchmark gap as a verdict on the product rather than a specific problem with a specific fix.

Each gap type has a corresponding intervention. Here's how to match them.

Activation rate below the median: rebuild the path to value

If activation is below 30%, the problem is almost never the product. It's the distance between signing up and experiencing the thing the product was built to do. Users hit friction, get confused, or simply don't know what to do next — and they leave before finding out whether your product would have solved their problem.

The fix is structural, not cosmetic. More tooltips won't help if the onboarding path itself routes users away from the activation event.

Replace passive tours (click Next, click Next, click Done) with action-based flows that require users to complete real tasks before advancing. Users who learn by doing activate at meaningfully higher rates than users who learn by watching.

Map your onboarding checklist items directly to the activation event, not to setup tasks. "Add a profile photo" does not move activation. "Create your first project and invite a teammate" does.

AB Tasty replaced their development-dependent feature launch process with Jimo's action-based tours and reached 2,000 users in week one of their first campaign — with their first flow built in 90 minutes.

Time-to-value too slow: find and fix the stall point

A TTV problem almost always lives at one specific step. Users aren't stalling everywhere — they're stalling at the moment the product asks them to do something they don't know how to do yet, or don't see the point of doing.

The fastest way to find it: look at your onboarding funnel and find the step with the largest drop between started and completed. That gap is your TTV problem in disguise.
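If your funnel export gives you started and completed counts per step, finding the stall point is a one-liner. The sketch below ranks steps by relative drop (the share of users who started a step but didn't finish it); the step names and counts are made up.

```python
def biggest_stall(funnel):
    """Return (step name, drop rate) for the step with the largest relative drop.

    `funnel` is a list of (step_name, started, completed) tuples.
    """
    def drop_rate(step):
        _, started, completed = step
        return (started - completed) / started if started else 0.0

    worst = max(funnel, key=drop_rate)
    return worst[0], drop_rate(worst)


# Hypothetical onboarding funnel.
funnel = [
    ("create_workspace", 1000, 900),
    ("connect_integration", 900, 450),
    ("invite_teammate", 450, 380),
]

step, rate = biggest_stall(funnel)
print(f"{step}: {rate:.0%} of users who start this step don't finish it")
# connect_integration: 50% of users who start this step don't finish it
```

Relative drop is used here rather than absolute user counts so that early, high-traffic steps don't dominate just because more users pass through them.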

Once you've found it:

Deploy a behavior-triggered contextual hint at that exact step — not a tour launched on page load, but guidance that fires when a user has been sitting on a screen for more than 30 seconds without taking action

Remove any setup steps that don't connect directly to the activation event. Each additional step that isn't required adds drop-off risk and pushes first value further away

If the stall is at an integration step, consider building a dedicated walkthrough that handles the full configuration flow — Zenchef cut their onboarding time from 30 days to 14 days by deploying guided flows at exactly the friction points their funnel revealed

Feature adoption depth below 20%: it's a discovery problem, not a product problem

When fewer than one in five active users adopts three or more core features, the instinct is to assume the other features aren't valuable enough. Usually, users don't know they exist, or they know they exist and don't understand why they're relevant to them.

Trigger in-app announcements to users whose behavior signals they'd benefit from a specific feature — not a broadcast to everyone, but a targeted nudge based on what the user has and hasn't done

Use Jimo's Success Tracker to identify which features correlate most strongly with 30-day retention in your own cohort data, then prioritize discovery efforts around those specifically — not the features that get the most clicks, but the ones that predict whether users stay

Customer Alliance saw a 970% spike in feature adoption after deploying targeted in-app announcements to the right user segments at the right moment

Tour completion below 50%: the tour is the problem

A tour completion rate below 50% on a short flow isn't a user attention problem. It's a tour design problem. Users are dropping off because the tour is triggering at the wrong moment, asking for too much, or advancing through steps that don't feel relevant to what they're trying to do.

Three things to check before rebuilding from scratch:

Trigger timing: Tours launched on first login interrupt users before they have context. Behavior-triggered tours — fired after a user has spent time on a page or attempted an action — land with significantly higher completion

Step count: Industry benchmarks suggest 3-step tours average 72% completion. Every additional step beyond that costs you drop-off. If your tour has 8 steps, it has 5 too many

Action vs. instruction: Tours that tell users what to do retain attention less than tours that make users do the thing. If a user can advance by clicking Next without performing the actual task, most of them will, and you'll have a 74% completion rate and a flat activation curve to show for it.

See where your product adoption metrics actually stand

You now have the benchmarks. You have the process for running the analysis. What you do with that next depends entirely on how far your numbers are from where they need to be.

For most product teams, the benchmarking analysis surfaces one uncomfortable truth: the metric they've been optimizing isn't the one holding back growth. Activation is the constraint, not retention. TTV is the constraint, not feature adoption depth. The dashboard looked fine because there was no external frame to compare it against.

That's the part benchmarking fixes. What it doesn't fix on its own is the gap.

Closing the gap requires two things most teams don't have in the same place: visibility into which specific behaviors predict retention in their own cohort data, and the ability to act on that insight inside the product without waiting on a sprint. Jimo's retention insights show you which behavioral milestones correlate with renewal in your product specifically — not just industry averages, but your users, your cohorts, your numbers. And actionable reports connect every guidance intervention directly to the downstream activation and retention outcomes it produced, so you're not defending engagement metrics to leadership but showing them which tours moved conversion and by how much.

If your benchmarking analysis has surfaced a gap worth closing, see how Jimo works.

FAQs

What is product adoption benchmarking analysis?

A benchmarking analysis is a systematic process of comparing your company's performance against industry standards, industry leaders, or similar organizations to identify performance gaps and opportunities for improvement. In the context of SaaS product adoption, it means comparing your specific metrics (activation rate, time-to-value, feature adoption depth) against industry benchmarks rather than only tracking internal data. Without that external reference point, you're measuring progress without knowing whether your current performance is competitive or falling behind market conditions your users are already aware of.

What is a good activation rate for SaaS?

Industry benchmarks from a study of 500+ SaaS products put the average activation rate at 36% and the median at 30%. The best product-led growth companies run at 40–50%. If your activation rate is below 30%, you're below the median; below 25% puts you in the bottom quartile. If it's above 40%, you're performing at the level of the strongest PLG companies. Where "good" lands for your specific product depends on your business model, trial length, and acquisition mix, but below 25% is a critical performance gap regardless of category.

How often should you benchmark your product adoption metrics?

A full benchmarking analysis is worth running quarterly, which leaves enough time for interventions to take effect and for cohort data to be meaningful. The five underlying metrics (activation rate, TTV, feature adoption depth, tour completion, and 30-day retention) should be reviewed weekly, not as benchmarking exercises but as operational signals. The benchmark comparison gives you the frame. The weekly review tells you whether you're moving inside it.

What types of benchmarking are relevant for SaaS product teams?

Three types of benchmarking apply directly to product adoption, and a fourth is occasionally relevant. Competitive benchmarking compares your key performance metrics against industry leaders and companies in the same industry to understand your market position. Internal benchmarking compares performance across cohorts, user segments, or business units within your own product, which is useful for identifying which acquisition channels or plan tiers produce the strongest activation. Process benchmarking examines your onboarding and activation workflows against industry best practices to identify where your own processes introduce friction that similar organizations have already solved. The fourth type, strategic benchmarking, is less common for product teams but relevant when evaluating whether your overall business strategy around product-led growth is aligned with how industry leaders in your category operate.

What's the difference between product adoption benchmarking and measuring product adoption?

Measuring product adoption tells you what's happening inside your product — your activation rate, your funnel drop-off points, your cohort retention curves. Benchmarking tells you whether those numbers are competitive. You need both: measurement gives you the data, benchmarking gives you the context to know which data points are actual problems and which are acceptable baselines for your category.

What are the most important performance metrics to benchmark for product adoption?

The five key performance indicators worth benchmarking are activation rate, time-to-value, feature adoption depth, tour completion rate, and 30-day cohort retention. These are the relevant metrics that correlate with retention and revenue outcomes, not vanity signals like login frequency or session counts. When collecting data for a benchmark comparison, pull each metric segmented by plan tier and acquisition channel rather than as overall performance numbers. Aggregate figures hide the performance gaps that matter most and make it impossible to implement strategies that target the right segments.

How can benchmarking improve product performance?

Benchmarking changes the prioritization conversation. Without external reference points, product teams tend to optimize whatever moved last quarter — regardless of whether it was the actual constraint on growth. Benchmarking reveals which metric is genuinely below the market standard, which focuses resources on the gap most likely to affect revenue. A 25% improvement in activation rate correlates with a 34% increase in revenue according to FairMarkit's analysis, a relationship consistent with the downstream compounding effect of activation on conversion and retention.

What is the difference between internal and external benchmarking for product teams?

Internal benchmarking compares your own performance across time periods, user segments, or cohorts. For example, tracking whether activation improved quarter-over-quarter after an onboarding redesign, or comparing performance metrics between self-serve and sales-assisted users within the same organization. External benchmarking compares your performance against industry data, competitive analysis, and standards set by industry associations and research bodies. Both matter. Internal benchmarking tells you whether your current processes are improving. External benchmarking tells you whether they're improving fast enough relative to industry practices and what competitors are achieving. Using only internal data creates the illusion of continuous improvement while the competitive gap widens.

Does product adoption benchmarking work for early-stage SaaS products?

Benchmarking is most useful once you have at least two to three months of cohort data and a defined activation event. Before that, the sample sizes are too small for the comparison to be reliable. Early-stage teams are better served by validating their activation event through cohort analysis first, confirming which behavior actually predicts retention in their own data before using industry benchmarks as a reference point. Benchmarking against the wrong event produces misleading gaps and misdirects intervention efforts.

How can benchmarking improve product performance and provide a competitive advantage?

Benchmarking surfaces the specific performance gaps between your current performance and what industry leaders achieve, which focuses improvement efforts on the constraints most likely to affect competitive edge rather than spreading resources across every metric at once. It also prevents the common trap of optimizing toward industry averages when best practices in your category have moved significantly beyond them. For product teams, the competitive advantage from benchmarking comes not from the comparison itself but from the speed at which you identify gaps, implement strategies to close them, and validate with cohort data whether those strategies produced the expected lift. Teams that run this cycle quarterly outpace those who benchmark once a year and spend the intervening time collecting data without acting on it.