TL;DR

Most B2B SaaS teams running a product-led growth (PLG) or hybrid motion don't have a product adoption strategy. They have an adoption backlog: a collection of onboarding flows, re-engagement emails, and CS playbooks built independently, measured against different definitions of success, and guaranteed to break every time a feature ships, a team restructures, or a new PM inherits the account. This article is not about what product adoption is, how to measure it, or which in-product tactics move activation rates. Those are covered in depth elsewhere on the Jimo blog. This article covers the organizational layer that makes all of it work: how Senior PMs at scaling B2B SaaS companies build a documented adoption operating model that assigns clear ownership across product, customer success, and growth, sequences interventions by behavioral signal rather than time-based schedule, and creates a review cadence that keeps the system calibrated to revenue outcomes rather than activity metrics. Walk away with the four strategic decisions every scalable adoption strategy requires, a five-question readiness assessment you can run in two minutes, and a one-sprint blueprint for turning your team's current adoption backlog into a system that compounds.

Your activation rate hasn't moved in two quarters. Product shipped a new onboarding checklist in January. Growth ran an A/B test on the welcome email in February. Customer success added a 14-day check-in to their playbook in March. Each initiative was reasonable. None of them compounded, because none of them were connected to each other.

That's a strategy problem, and the fix is different from what most teams reach for first.

Execution problems get solved with better tactics: a more targeted tooltip, a shorter checklist, a faster path to the activation event. Strategy problems get solved by answering the questions your team currently answers inconsistently, in different meetings, using different metrics. Who owns activation? What behavioral signal triggers the handoff from product-led to CS-assisted? Are your interventions firing because a user did something, or because seven days passed since signup?

This article is written for Senior PMs who already understand what adoption is and how to measure it, and who are ready to move from running adoption initiatives to operating an adoption system. If you need the metrics foundation first, the Jimo blog's piece on how to measure product adoption covers that ground. If you need the tactical execution layer, the companion piece on how to increase product adoption has the intervention playbook in full. What follows is the strategy layer that holds all of it together.

The Difference Between Adoption Tactics and an Adoption Strategy

Ask any PM what their product adoption strategy is and most will describe what their team does: a product tour on signup, a checklist during onboarding, a CS check-in at day 14. Those are tactics. They are useful, and they are not a strategy.

The distinction matters because tactics and strategies fail in different ways. A tactic that isn't working is a conversion rate problem. A strategy that isn't working is an organizational problem — and it keeps regenerating broken tactics no matter how many you fix.

What a tactics-only team looks like in practice

Picture the Monday morning adoption review at a company running on tactics. Product reports that the new onboarding checklist reached 74% completion last week. Growth shares that the reactivation email sequence is performing above benchmark on open rates. Customer success flags three accounts at churn risk based on health scores that dropped over the weekend. Each team has their number. Nobody in the room is looking at the same metric, and nobody owns the thread that connects all three reports to the same revenue outcome: whether users are actually adopting the product.

The conversation ends with action items spread across three teams. Product will tweak the checklist. Growth will A/B test subject lines. CS will schedule calls. Two weeks later, the same meeting happens again.

What a strategy-led team looks like instead

Now picture the same meeting at a company running on a documented adoption strategy. Product, growth, and CS are looking at a shared funnel analysis view that tracks users from signup through their defined activation milestone and into second-week retention. Everyone agreed on what "activated" means six weeks ago, so the metric means the same thing to all three teams. When activation drops in a cohort, the room knows whose stage owns the intervention and what the trigger protocol says.

The meeting ends with one owner, one decision, and one metric to move.

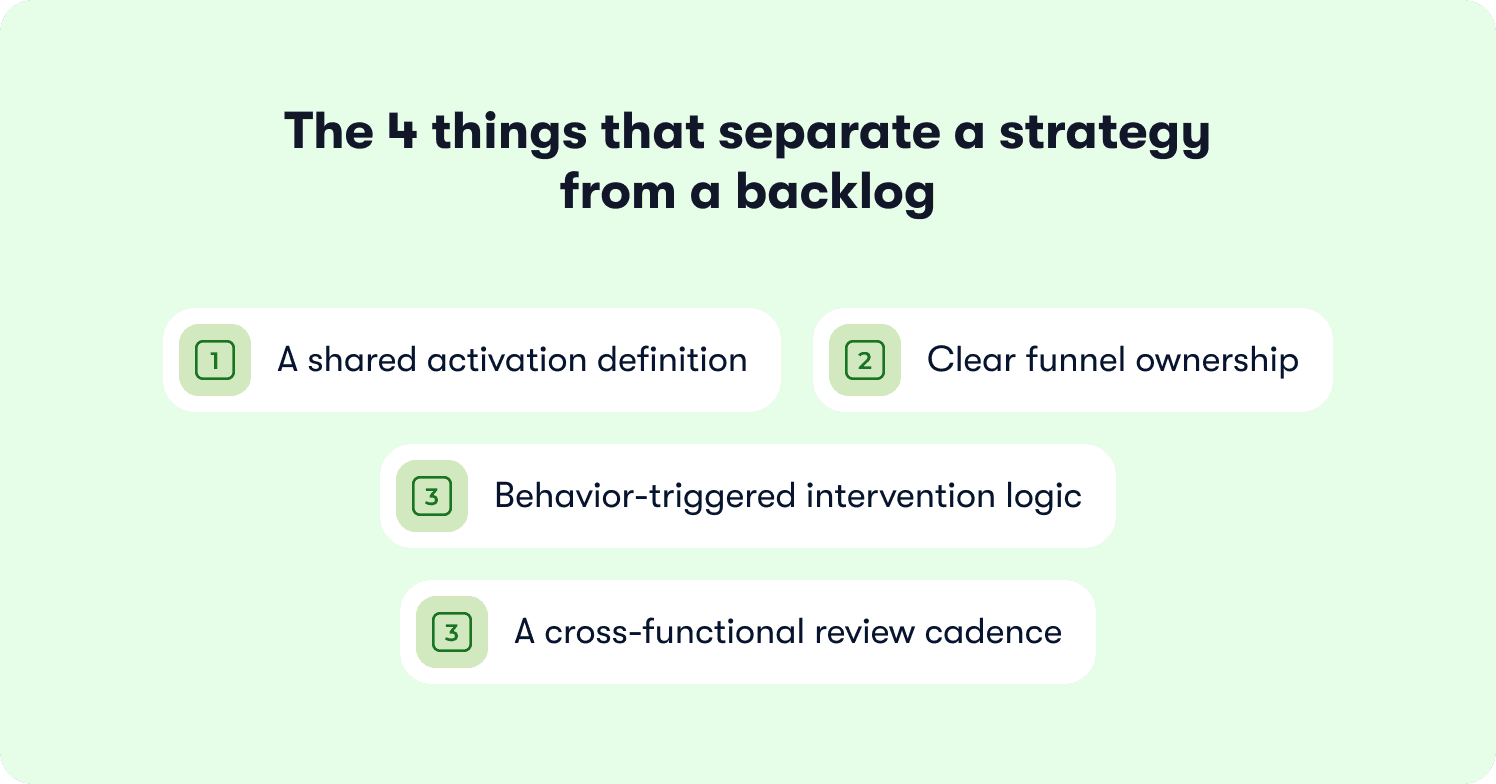

The four things that separate a strategy from a backlog

A product adoption strategy is a documented operating model with four components that a backlog of tactics will never have on its own:

A shared activation definition. One behavioral event, agreed on across product, CS, and growth, that defines what "adopted" means for your primary user segment. Without this, every team is optimizing for a different target.

Clear funnel ownership. A named owner at each stage of the product adoption lifecycle, with documented handoff protocols between teams — not just informal norms that shift when someone leaves.

Behavior-triggered intervention logic. Guidance that fires when a user does or doesn't do something specific, rather than on a time-based schedule that treats a day-one user and a day-seven user identically.

A cross-functional review cadence. A regular meeting where outcome metrics like time-to-value, trial-to-paid conversion, and feature discovery depth are reviewed by all three teams together, not in separate standups.

None of these components require a new tool. They require decisions that most teams have never formally made.

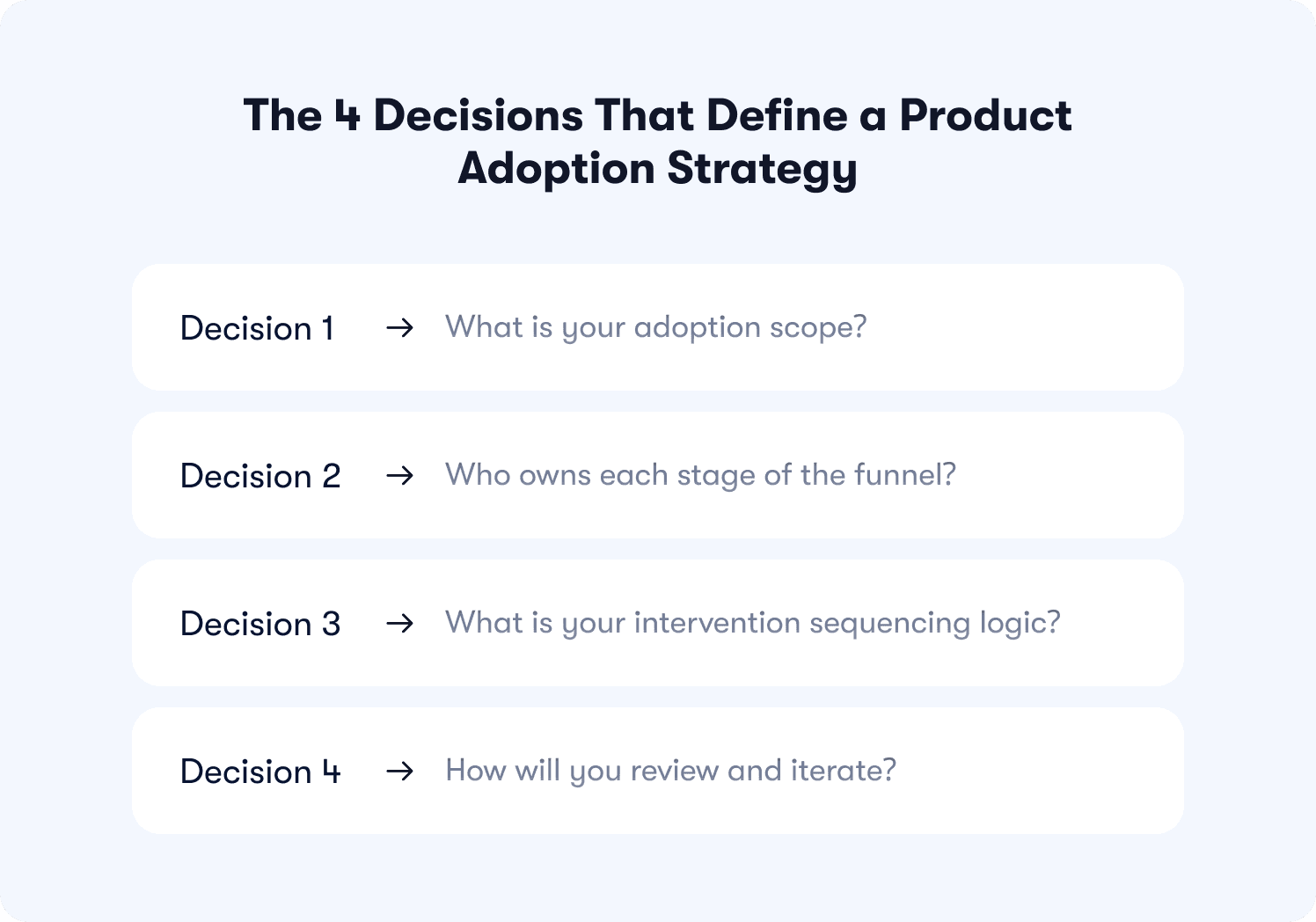

The Four Decisions That Define a Product Adoption Strategy

A scalable product adoption strategy is built on four strategic decisions, not a list of tactics. Each one corresponds to a choice the PM team needs to make explicitly and document, because leaving any of them implicit is what turns a strategy into a backlog.

Decision 1: What is your adoption scope?

Before any intervention gets built, the team needs to agree on which users and which behaviors are in scope. Most teams skip this step and end up building a strategy that tries to serve three fundamentally different user states at once:

New trial users in their first 14 days who have never reached an activation event

Existing paid users who activated once but have not deepened their feature adoption beyond a single workflow

Dormant accounts who activated months ago and have since disengaged

Each of these cohorts has a different bottleneck, requires a different intervention type, and belongs to a different functional owner. A strategy that conflates them produces guidance that is too generic to move any of them. Adoption rates across all three cohorts stay flat while the team generates a healthy volume of launches.

Scoping your adoption strategy means naming your primary cohort explicitly for a given period. The goal is not to ignore the others; it is to avoid optimizing for all three simultaneously with the same playbook. Define scope, then move to the next decision.

Decision 2: Who owns each stage of the funnel?

Ownership is the most skipped decision in product adoption strategy, and its absence is the most common reason adoption programs stall at Series B scale. When a user falls between two stages, the question of who acts becomes a negotiation rather than a protocol.

The product adoption lifecycle has distinct stages, each with a natural functional owner:

Awareness → Marketing. Positioning accuracy filters trial cohort quality before a single in-product moment fires. A user who arrives with a misaligned expectation of what your product does will churn before any guidance reaches them.

Activation → Product. In-product guidance is the primary lever at this stage. No email reaches a user at the exact moment they encounter friction inside the product. CS involvement at this stage is expensive and doesn't scale, so the right tool here is behavior-triggered in-product guidance, deployed without engineering dependencies.

Adoption → Product. In-product nudges handle the full user population at this stage automatically, firing the moment a behavioral signal indicates a stall and not on a calendar schedule. CS does not co-own the intervention layer here. The in-product nudge fires without CS involvement. CS is only triggered when a documented behavioral threshold signals that the product-led approach has not moved the account within a defined window and a human conversation is the right next step.

Retention → CS with product instrumentation. CS uses product behavioral data to identify risk. Product updates the guidance that surfaces at high-risk moments. Neither team can do their job well here without the other's input, which makes this the stage most dependent on a documented handoff protocol.

Expansion → CS with product-qualified signals. The product identifies expansion-ready accounts using product-qualified lead signals like usage thresholds, feature adoption depth, and multi-seat behavior. CS closes the conversation. The sequence matters: CS engagement before the product signal fires is premature; CS engagement after the product has had its window to convert and has not done so is the correct trigger for human involvement.

Documenting ownership is straightforward. The harder part is defining the handoff protocol: the specific behavioral signal that triggers the handoff, who receives it, and what the response expectation is. Without that protocol in writing, the handoff defaults to whichever team notices the problem first — which is usually CS, usually too late.
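One way to picture the product-to-CS handoff rule is as a small predicate: the in-product nudge gets a defined window, and CS is pulled in only if that window closes without progress. The sketch below is illustrative only. The `AdoptionNudge` type, the field names, and the seven-day default window are assumptions made for the example, not values prescribed by this article or by any tool.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class AdoptionNudge:
    fired_at: datetime                 # when the in-product nudge fired
    progressed_at: Optional[datetime]  # when the account moved forward, if it did


def should_hand_off_to_cs(
    nudge: AdoptionNudge,
    now: datetime,
    window: timedelta = timedelta(days=7),  # hypothetical window; set your own
) -> bool:
    """True only when the product-led window has elapsed without progress."""
    if nudge.progressed_at is not None:
        return False  # the product layer did its job; no human needed
    return now - nudge.fired_at >= window


stalled = AdoptionNudge(fired_at=datetime(2024, 1, 1), progressed_at=None)
print(should_hand_off_to_cs(stalled, now=datetime(2024, 1, 5)))  # still inside the window
print(should_hand_off_to_cs(stalled, now=datetime(2024, 1, 8)))  # window elapsed
```

Writing the rule this way forces the team to name the window and the progress signal explicitly, which is the part of the protocol that usually stays implicit.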

Crossbeam's team achieved a 3x lift in click-through rates on action-driving banners by tightening exactly this kind of product-to-user connection at the activation stage. The result came from the product layer doing its job and reaching users at the right moment so CS could focus on the accounts where a human conversation would move the needle.

Decision 3: What is your intervention sequencing logic?

Most adoption teams build a flat intervention stack: a product tour on day one, a checklist on day two, an email on day three, a CS check-in on day seven. The sequence is a calendar, not a strategy. It reflects when resources were available to build each piece, not when users need each piece.

A sequencing logic grounded in strategy asks a different question: at each moment in the user journey, what does a user who is about to churn look like, and what intervention gives them the best chance of continuing?

The answer will almost never be the same for two users in different behavioral states. A user who has not completed the activation event by day five needs different guidance than a user who completed activation on day two but has not returned to the product since. Both are at risk of churning. In a behavior-triggered model, they receive completely different interventions because they are at completely different stages of the progressive onboarding journey.

Documenting your sequencing logic means writing down, for each funnel stage, the specific behavioral condition that triggers an intervention. That document is not a Gantt chart. It is closer to a decision tree: if the user does X, fire Y; if the user does not do X within Z days, fire W instead. When that logic lives in a document that all three teams have agreed on, it can be implemented consistently, tested systematically, and updated without starting from scratch every quarter.

AB Tasty deployed this kind of deliberate, behavior-informed guidance execution using Jimo and reached 2,000 users in week one of a new feature release with a 6x faster launch cycle than their previous approach. That speed is only possible when the sequencing logic is already decided and the execution layer doesn't require an engineering sprint to adjust it.

Decision 4: How will you review and iterate?

An adoption strategy without a review cadence is a document. A review cadence without the right structure is a status update. Neither one closes the loop between what the team ships and whether it moves a revenue metric.

The review model that works for scaling B2B SaaS teams has two tiers:

Weekly tactical review. The PM reviews behavioral signal data at the intervention level. Which flows are completing? Which stages are dropping? Where is the sequencing logic producing the wrong outcome for a specific cohort? This is a product-owned review, and it drives tactical adjustments: a flow gets resequenced, a trigger condition gets narrowed, a step gets removed because it's adding friction rather than reducing it. The metric here is the lead measure (completion rates, step drop-offs, time through the funnel), used as a diagnostic signal, not a success metric reported upward.

Monthly cross-functional strategy review. Product, CS, and growth come together to review outcome metrics: activation rate movement, feature adoption depth, trial-to-paid conversion by cohort. This is where strategy decisions get made. Has the primary bottleneck shifted from activation to retention? Does the ownership model still hold given a team restructure? Has the activation event definition drifted from what the data says predicts retention? These are not tactical questions. They are the questions that determine whether the strategy itself is working, and they can only be answered when all three teams are looking at the same metrics in the same room.

The monthly review is what most teams don't have. Skipping it means the weekly tactical reviews optimize in isolation, each team improving their own metric while the overall adoption rate drifts. The strategy is still technically in place. Nobody notices it has stopped working until a cohort retention number appears in a board deck.

The Adoption Strategy Readiness Assessment

Most teams assume they have an adoption strategy because they have adoption activity. The two are not the same. This assessment takes two minutes and tells you exactly where the gaps are before you spend another quarter optimizing in the wrong direction.

Answer each question honestly. A "no" is not a failure — it is a starting point.

Question 1: Can you write your activation event in one sentence?

Not a feature. Not a stage. A single, specific behavioral event that your team has agreed predicts whether a new user will retain past 30 days.

If three people on your team write down their answer right now and produce three different sentences, you do not have a shared activation definition. You have three separate teams optimizing for three separate targets and calling it a strategy. The activation rate metric is only meaningful when everyone on the team is measuring the same behavior.

What "yes" looks like: A written, one-sentence activation event that product, CS, and growth have all reviewed and agreed on in the last 90 days. Something like: "User creates and publishes their first workflow within seven days of signup."

What "no" looks like: The activation event is described differently depending on who you ask, or it has not been revisited since the product last made a significant change to its core workflow.

Question 2: Does every stage of your funnel have a named owner and a written handoff protocol?

Ownership without a handoff protocol is not ownership. It is intent. The handoff protocol is the specific behavioral signal that moves an account from one team's responsibility to another's, and it needs to be written down, not assumed.

Ask your CS team what triggers them to reach out to a new trial account. Then ask your growth team what triggers an automated re-engagement flow for the same account. If the answers overlap, you have two teams acting on the same user at the same time based on different signals. If the answers contradict each other, you have a gap where nobody acts at all.

What "yes" looks like: A shared document, even a simple one, that names the owner at each funnel stage and describes the behavioral condition that triggers each team handoff.

What "no" looks like: Handoffs happen informally, based on whoever notices a problem first, or CS reaches out on a fixed calendar schedule regardless of what the product data shows.

Question 3: Are your interventions triggered by behavioral signals or by a time-based schedule?

Time-based sequences feel organized. They are also blind to what users are actually doing. A user who completed the activation event on day two and a user who has not logged in since day one will receive the same day-seven email in a time-based system. One of those users needs encouragement. The other needs a completely different conversation.

Behavior-triggered intervention logic fires based on what a user did or did not do. It requires knowing your activation event (Question 1), which is why this question comes after it.

What "yes" looks like: Your primary onboarding interventions fire on behavioral conditions. The trigger is a user action, or the absence of one within a defined window, not a calendar date.

What "no" looks like: Your onboarding sequence runs on day one, day three, day seven, and day 14 regardless of what any individual user has done between those dates.

Question 4: Does your team review outcome metrics, not activity metrics, in a cross-functional meeting at least once a month?

Completion rates, email open rates, and tooltip click-throughs are activity metrics. They tell you that things happened. Trial-to-paid conversion by cohort, time-to-value by segment, and feature discovery depth at 30 days are outcome metrics. They tell you whether your adoption system is working.

The monthly cross-functional review is where product, CS, and growth sit in the same room looking at outcome metrics together. Without it, each team improves their own activity number while the shared outcome metric drifts.

What "yes" looks like: A recurring monthly meeting where all three teams review the same adoption outcome metrics, make strategy-level decisions, and document what changed and why.

What "no" looks like: Adoption performance is reviewed separately by each team, or the shared meeting focuses on activity metrics and updates rather than outcome-level decisions.

Question 5: Is your adoption operating model written down and current?

A strategy that exists only in people's heads is one resignation letter away from disappearing. When a new PM joins, when CS restructures, when a feature ships that changes the activation flow, an undocumented strategy requires a re-education process that burns weeks and reintroduces the coordination problems the strategy was built to solve.

What "yes" looks like: A living document — updated in the last 90 days — that captures the activation event definition, funnel ownership map, intervention sequencing logic, and review cadence. All three teams have seen it.

What "no" looks like: The adoption operating model lives in one person's Notion page, in an onboarding deck from last year, or entirely in institutional memory.

How to read your results

If you answered "yes" to all five, your team is operating a strategy. The next investment is in iteration speed: how fast can you move from a behavioral signal to a live intervention?

If you answered "no" to one or two questions, you have a working foundation with specific gaps. Address the "no" answers in priority order: activation definition first, then ownership, then sequencing logic, then review cadence, then documentation.

If you answered "no" to three or more questions, your team is running tactics. That is a recoverable position, and the next section gives you the build path.
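For teams that want to record the assessment somewhere more durable than a whiteboard, the reading rules above can be sketched in a few lines. The question keys and the verdict strings are made-up labels for this example. The thresholds and the priority order are the ones stated in this section.

```python
# Priority order for closing gaps, as stated in the article.
PRIORITY = [
    "activation_definition",
    "funnel_ownership",
    "sequencing_logic",
    "review_cadence",
    "documentation",
]


def read_results(answers: dict[str, bool]) -> tuple[str, list[str]]:
    """Return (verdict, gaps in priority order) for the five yes/no answers."""
    missing = [q for q in PRIORITY if q not in answers]
    if missing:
        raise ValueError(f"unanswered questions: {missing}")
    gaps = [q for q in PRIORITY if not answers[q]]
    if not gaps:
        verdict = "operating a strategy"
    elif len(gaps) <= 2:
        verdict = "working foundation with specific gaps"
    else:
        verdict = "running tactics"
    return verdict, gaps


verdict, gaps = read_results({
    "activation_definition": False,
    "funnel_ownership": True,
    "sequencing_logic": False,
    "review_cadence": True,
    "documentation": True,
})
print(verdict, gaps)
```

The returned list is already sorted by the recommended fix order, so the first entry is the gap to address first.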

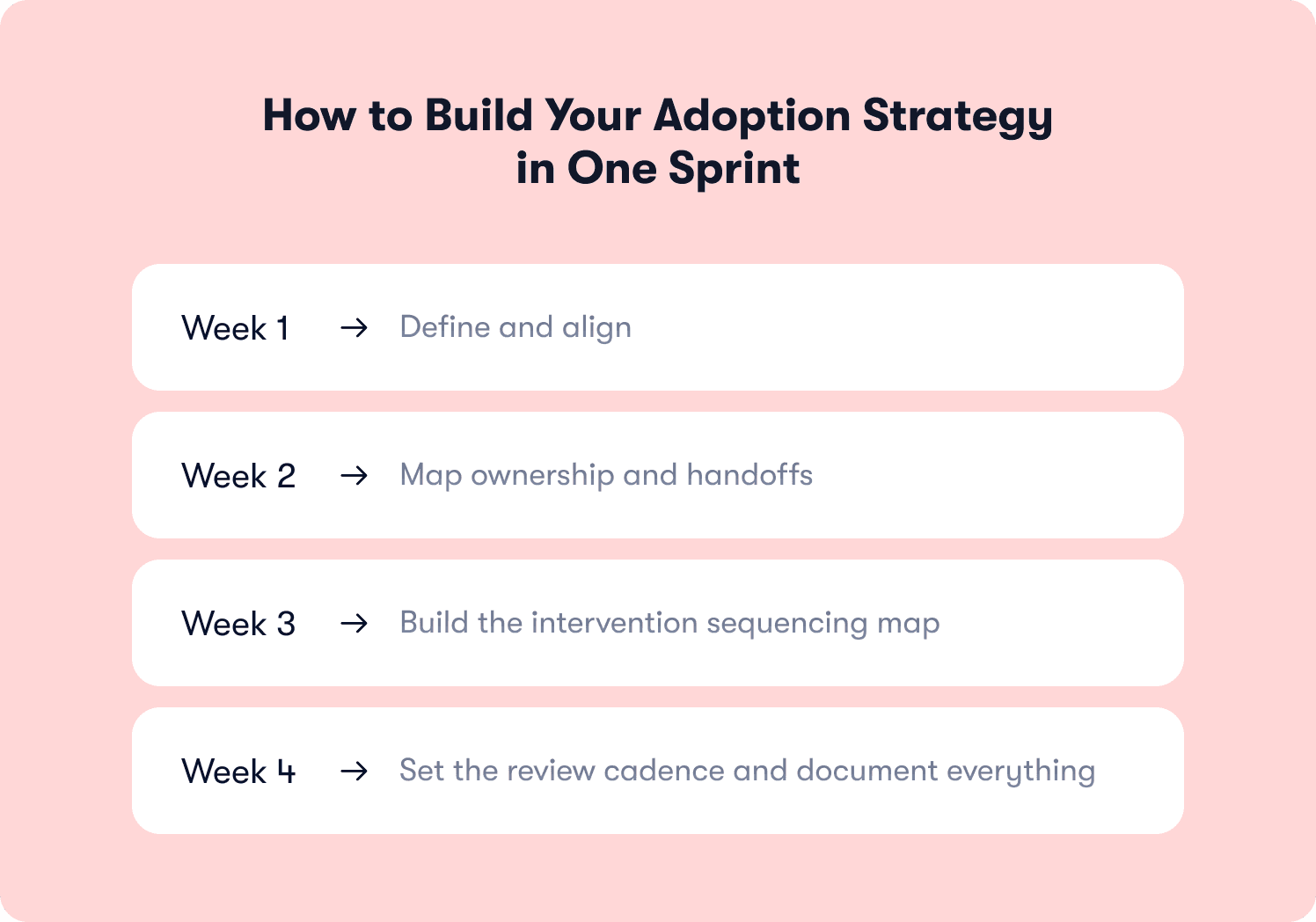

How to Build Your Adoption Strategy in One Sprint

The goal of this sprint is four outputs: a written activation event definition, a funnel ownership map, a trigger-based intervention map, and a review cadence document. All of them require organizational alignment to be worth anything.

Here is how to produce each one without turning it into a six-week project.

Week 1: Define and align

The first week has one job: get product, CS, and growth into a room and produce a written activation event definition that everyone agrees on.

Start with your behavioral data. Pull retention curves segmented by early product actions. The behavior that most strongly separates users who retained past 30 days from users who did not is your activation event candidate. If your data is not yet mature enough to run that correlation, use the proxy: what is the action after which a user first tells you the product is working? That is usually the closest thing to a value moment your team can name right now.
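As a rough illustration of that comparison, the sketch below ranks early actions by the gap in 30-day retention between users who took the action and users who did not. The sample rows, field names, and action names are invented for the example. A real analysis would run on your own event data with far more users, and a large gap is a candidate to validate, not proof of causation.

```python
# Hypothetical sample: one row per trial user. All values are illustrative.
users = [
    {"early_actions": {"created_project", "invited_teammate"}, "retained_30d": True},
    {"early_actions": {"created_project", "invited_teammate"}, "retained_30d": True},
    {"early_actions": {"created_project", "completed_checklist"}, "retained_30d": False},
    {"early_actions": {"completed_checklist"}, "retained_30d": False},
    {"early_actions": {"created_project"}, "retained_30d": False},
    {"early_actions": set(), "retained_30d": False},
]


def retention_gap_by_action(rows):
    """Retention of users who took each action minus retention of those who did not."""
    actions = set().union(*(r["early_actions"] for r in rows))
    gaps = {}
    for action in actions:
        did = [r["retained_30d"] for r in rows if action in r["early_actions"]]
        did_not = [r["retained_30d"] for r in rows if action not in r["early_actions"]]
        if not did or not did_not:
            continue  # no comparison group, so the gap is undefined
        gaps[action] = sum(did) / len(did) - sum(did_not) / len(did_not)
    return gaps


gaps = retention_gap_by_action(users)
candidate = max(gaps, key=gaps.get)  # action with the widest retention gap
print(candidate, gaps)
```

The action with the widest gap becomes the sentence you bring to the alignment meeting.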

Write the definition in one sentence. It should name a specific action, a specific timeframe, and a specific user segment if relevant. Bring that sentence to the alignment meeting. Expect disagreement. The disagreement is productive because it surfaces the places where each team has been optimizing for a different target. The meeting ends when all three teams have signed off on the same sentence.

One output. One meeting. One week.

Week 2: Map ownership and handoffs

With the activation event defined, the second week maps who owns what and what triggers movement between owners.

Use the funnel stage ownership model from Decision 2 of this article as the starting template. For each stage, assign a named team. Then document the handoff: what behavioral signal moves an account from product ownership to CS ownership, and what is the expected response time when that signal fires?

The handoff protocol does not need to be elaborate. A table with three columns covers it: stage, owning team, trigger condition. The goal is that when a trial account stalls at activation, nobody has to ask whose job it is to act. The protocol already answered that question.
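The three-column table can live in a doc or a spreadsheet, but writing it as data makes the gaps easy to spot. In this minimal sketch the owners follow the stage model from Decision 2. The trigger wording is placeholder text, and `HandoffRow`, `owner_of`, and `incomplete_rows` are hypothetical names chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HandoffRow:
    stage: str
    owning_team: str
    trigger_condition: str  # behavioral signal that moves the account onward


# Placeholder triggers: each team would substitute its own agreed signal.
PROTOCOL = [
    HandoffRow("awareness", "marketing", "visitor starts a trial"),
    HandoffRow("activation", "product", "activation event completed"),
    HandoffRow("adoption", "product", "no progress within the product-led window"),
    HandoffRow("retention", "cs", "usage crosses an expansion threshold"),
    HandoffRow("expansion", "cs", ""),  # left blank on purpose to show a gap
]


def owner_of(stage: str) -> str:
    """Answer 'whose job is it?' from the written protocol."""
    for row in PROTOCOL:
        if row.stage == stage:
            return row.owning_team
    raise KeyError(f"no owner documented for stage {stage!r}")


def incomplete_rows(protocol: list[HandoffRow]) -> list[str]:
    """Stages missing an owner or a written trigger condition."""
    return [r.stage for r in protocol if not r.owning_team or not r.trigger_condition]


print(owner_of("activation"))
print(incomplete_rows(PROTOCOL))
```

An empty trigger cell is the written equivalent of a handoff that depends on whoever notices first.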

A secondary output of this week: a quick audit of your current interventions against the ownership map. Which interventions have no clear owner? Which stages have overlapping ownership that creates conflicting guidance? Surface the conflicts now rather than discovering them in a user's inbox six weeks from now.

💡 The critical rule: in the Adoption stage, the in-product nudge fires automatically without CS involvement. CS is only triggered when the product has had its defined window and the account has not converted.

Week 3: Build the intervention sequencing map

The third week translates the ownership map into a trigger-based sequencing document. For each funnel stage, write down the behavioral conditions that fire each intervention. The format should resemble a decision tree more than a Gantt chart.

For the activation stage, the logic might read: if a user has not completed the activation event within five days of signup, trigger an in-product checklist focused on the single action most predictive of activation. If the user completes the activation event within three days, suppress the checklist and surface a secondary workflow introduction instead. The conditions are behavioral. The interventions are specific. The logic is written down.
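Written as code, the activation-stage example above might look like the following sketch. The five-day and three-day thresholds come from the example. The function name, the intervention labels, and the choice to do nothing for users who activate after day three are assumptions, since the example does not cover that case.

```python
from typing import Optional

CHECKLIST_AFTER_DAYS = 5  # from the example: no activation within five days
FAST_ACTIVATION_DAYS = 3  # from the example: activation within three days


def activation_intervention(
    days_since_signup: int,
    activated_on_day: Optional[int],
) -> Optional[str]:
    """Pick the activation-stage intervention from behavior, not the calendar.

    days_since_signup counts full days elapsed; activated_on_day is None
    until the user completes the activation event.
    """
    if activated_on_day is not None:
        if activated_on_day <= FAST_ACTIVATION_DAYS:
            return "secondary_workflow_intro"  # checklist is suppressed
        return None  # assumption: later activators get no activation-stage prompt
    if days_since_signup >= CHECKLIST_AFTER_DAYS:
        return "activation_checklist"
    return None  # still inside the five-day window


print(activation_intervention(days_since_signup=5, activated_on_day=None))
print(activation_intervention(days_since_signup=4, activated_on_day=2))
```

Two users at the same point in the calendar can get different guidance, or none at all, depending on what they have done.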

At this stage, the tool question becomes relevant. Behavior-triggered sequencing at this level of specificity requires a platform that can act on real user events, not calendar dates. Tools like Jimo let PMs configure and deploy this logic directly, without engineering involvement, which means the sequencing map you write in week three can be live in the same sprint. The strategy does not wait for a developer queue to catch up.

For teams newer to behavior-triggered execution, the companion piece on interactive onboarding strategies covers the implementation mechanics in detail.

Week 4: Set the review cadence and document everything

The final week has two outputs: a review cadence document and a complete adoption strategy one-pager that captures the work from weeks one through three.

The review cadence document is short. It names the weekly tactical reviewer, the monthly cross-functional meeting participants, the metrics reviewed at each cadence level, and the decision rights each team holds. Decision rights matter because without them, the monthly meeting becomes a status update rather than a strategy session. Product needs the authority to reassign funnel ownership when the data says a stage is mis-owned. CS needs the authority to flag when the handoff protocol is not working in practice. Growth needs the authority to challenge the activation event definition when their cohort data tells a different story.

The adoption strategy one-pager pulls together all four outputs into a single document. It does not need to be long. It needs to be current, shared, and revisited at the monthly review. A strategy that exists in one person's head survives exactly as long as that person stays in the role. A strategy that lives in a shared document survives team changes, product pivots, and leadership reviews.

At the end of the sprint, your team has something most B2B SaaS companies at Series A and Series B do not: a written adoption operating model with real owners, behavior-based logic, and a cadence that keeps it honest. The tactics your team was already running become more effective immediately, because they are now connected to a shared definition of success rather than operating as independent initiatives pointing at different targets.

📖 You have the strategy framework. Now you need the execution layer. Our playbook covers 19 tactics, each mapped to the funnel stage and behavioral signal it addresses, so you can plug them directly into the sequencing logic you just built → Read our free guide

What a Scalable Product Adoption Strategy Looks Like in Practice

The four decisions and the one-sprint build path are useful in the abstract. They are more useful when you can see how they connect in a real operating context.

Consider a project management SaaS team at Series B. Their product has solid acquisition: 600 trial signups per month, a CAC that leadership is comfortable with, and a sales-assisted motion for accounts above a certain seat threshold. Their problem is familiar. Trial-to-paid conversion sits at 19%. Day-30 retention for converted accounts is declining quarter over quarter. The CS team is stretched because they are intervening manually in accounts that should be self-serving through the product.

The team has adoption activity. They have a product tour, a three-step checklist, a day-seven email, and a CS outreach template. What they do not have is a strategy.

Where the diagnosis begins

The PM runs the readiness assessment and finds three "no" answers. The activation event is described differently by product ("user creates their first project"), CS ("account has at least two active members"), and growth ("user completes the setup checklist"). The three definitions are not wrong. They are measuring three different things, and none of them has been validated against retention data.

The team pulls cohort data. Users who created a project and invited at least one teammate within five days retained at 61% at day 30. Users who completed the checklist but did not invite a teammate retained at 23%. The checklist completion rate had been reported as a success metric for two quarters. The data says it was measuring the wrong behavior the entire time.

The activation event gets redefined: "User creates a project and receives a response from an invited teammate within five days of signup." One sentence. Three teams aligned.

How ownership and sequencing change the outcome

With the activation event defined, the ownership map reveals a gap. Nobody owned the "teammate invitation" behavior specifically. Product had built the invite flow. Growth had mentioned it in the welcome email. CS assumed users would figure it out. The invite step was covered by three teams and owned by none of them.

The team assigns activation ownership to product, with a specific handoff trigger: any account where the primary user has created a project but has not sent a teammate invite by day three moves to a CS-assisted nudge sequence. The product layer handles the majority of users with a behavior-triggered in-product prompt surfaced at the exact moment a user creates a project without adding a collaborator. CS handles the accounts where the behavioral signal indicates a higher-risk stall.

The intervention that previously fired on day seven for every user regardless of their state now fires on day three, only for users who have completed the project creation step but not the invite step. The guidance is specific because the trigger is specific. Users who have already invited a teammate never see it.

What the review cadence catches

Six weeks after the strategy goes live, the monthly cross-functional review surfaces something the weekly tactical reviews had not flagged. Trial-to-paid conversion is moving for individual users, but account-level retention is still flat. CS brings the data: in accounts with more than five seats, the activation event is being reached by the champion user but not by the broader team. The strategy defined activation at the user level. The retention problem is at the account level.

The activation event gets a second condition added: "at least three team members have each created a project and received a response from an invited teammate within 14 days of account creation." The strategy updates. The sequencing map updates. The review cadence is what made the problem visible before it compounded into another quarter of flat retention.

This is what a scalable product adoption strategy produces that a backlog of tactics cannot: a system that surfaces its own failure modes before they show up as churn.

Your Adoption Backlog Has an Expiration Date

There is a point in every scaling B2B SaaS company where the informal coordination that held the adoption program together stops working. A PM leaves and takes the activation event definition with them. A feature ships and breaks the onboarding flow nobody had documented. CS and product spend three weeks debating whose job it was to catch an at-risk cohort that has already churned.

That point arrives faster than most teams expect. And when it does, the problem is never that the team lacked good tactics. It is that the tactics were never held together by a system.

The five questions in the readiness assessment are the fastest way to see where your system has gaps before they produce a churn event. The one-sprint build path closes those gaps with four outputs that most teams can produce in less time than they spent debating which tooltip to A/B test last quarter. And the four strategic decisions (scope, ownership, sequencing logic, and review cadence) are what turn a set of adoption initiatives into a program that gets more effective over time rather than resetting every time something changes.

Tactics produce results in isolation. A strategy produces a system that compounds them. At Series B, that compounding is what separates an adoption program that leadership trusts from one that generates weekly updates and quarterly explanations.

Jimo is built for the execution layer that strategy requires: behavior-triggered guidance, no-code iteration, and measurement that connects in-product moments to revenue outcomes. If you want to see what that looks like for your product, try Jimo for free and build your first behavior-triggered flow without writing a single line of code.

FAQs

How is a product adoption strategy different from an onboarding strategy?

Onboarding is one stage of the adoption lifecycle — the period between signup and first value. An adoption strategy covers the full arc: from the first session through activation, feature discovery, habit formation, retention, and expansion. A team can have a well-designed onboarding flow and still have no adoption strategy if the post-activation stages have no owner, no measurement model, and no documented intervention logic. For Senior PMs building toward Series B scale, the onboarding layer is a necessary input to the adoption strategy, not a substitute for it.

What should a product adoption strategy include?

At minimum, a product adoption strategy document should contain four things: a written activation event definition agreed on by product, CS, and growth; a funnel ownership map with named owners and handoff protocols at each stage; a trigger-based intervention sequencing map that describes what behavioral conditions fire each intervention; and a review cadence that specifies who reviews which metrics, at what frequency, and with what decision rights. Teams that want to go further can layer in a cohort measurement framework connecting adoption milestones to revenue outcomes, but the four components above are sufficient to move a team from a backlog to a strategy.

How do you measure whether a product adoption strategy is working?

The right measurement layer tracks outcome metrics, not activity metrics. Outcome metrics include activation rate by cohort, time-to-value by user segment, feature adoption depth at 30 days, and trial-to-paid conversion. Activity metrics (completion rates, tooltip clicks, email open rates) are useful for diagnosing how an individual intervention performs, but they should not be used to evaluate whether the strategy itself is working. A strategy is working when outcome metrics move consistently in the right direction across multiple cohorts, not when a single tactic produces a spike in a single week.
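
As a rough illustration of "consistently across multiple cohorts", the sketch below computes activation rate per signup cohort and checks whether the rate rises from each cohort to the next. Everything here is an assumption for illustration: the function names are hypothetical, cohorts are labeled with sortable strings such as `"2024-01"`, and strict cohort-over-cohort improvement is a deliberately simple test that a real review would likely soften.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def activation_rate_by_cohort(users: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Map each cohort label to the share of its users who activated.

    `users` is an iterable of (cohort_label, activated) pairs.
    """
    totals = defaultdict(int)
    activated = defaultdict(int)
    for cohort, is_activated in users:
        totals[cohort] += 1
        if is_activated:
            activated[cohort] += 1
    return {cohort: activated[cohort] / totals[cohort] for cohort in sorted(totals)}


def improving_across_cohorts(rates: Dict[str, float], min_cohorts: int = 3) -> bool:
    """True if the rate rises in every consecutive cohort.

    Assumption: cohort labels sort chronologically, and fewer than
    `min_cohorts` cohorts is too little evidence to call a trend.
    """
    values = [rates[cohort] for cohort in sorted(rates)]
    if len(values) < min_cohorts:
        return False
    return all(later > earlier for earlier, later in zip(values, values[1:]))
```

A single-week spike from one tactic would show up in one cohort at most, so it fails the check. That is the distinction between evaluating an intervention and evaluating the strategy.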

Who should own the product adoption strategy at a Series A or Series B company?

Ownership of the strategy document typically sits with the Senior PM or Head of Product, because they are best positioned to lead the cross-functional coordination the strategy requires. But strategy ownership is not the same as execution ownership. The PM owns the document and the monthly review cadence. Product owns the activation stage and the in-product intervention layer. CS owns the retention and expansion stages, with product instrumentation feeding their signals. Growth owns the awareness stage and any out-of-product sequences that support activation. The strategy works when all three teams are contributing to the same operating model rather than running independent programs that happen to share a user base.

What is the most common reason product adoption strategies fail?

The most common failure mode is not a bad strategy. It is an undocumented one. Teams that build adoption programs without writing down the activation event definition, the ownership model, and the sequencing logic find that the strategy evaporates whenever team composition changes, a product update shifts the activation flow, or a new quarter brings new priorities. The second most common failure mode is a strategy that lives in a document but has no review cadence to keep it current. A strategy that is not revisited at least quarterly drifts from what the data actually says about user behavior, and teams end up optimizing for an activation event that no longer predicts retention. The fix for both is the same: write it down, share it across teams, and review it on a fixed cadence with the people who have the authority to update it.

How long does it take to build a product adoption strategy?

A functional first version can be produced in four weeks using the one-sprint build path in this article. Week one produces the activation event definition. Week two maps funnel ownership and handoff protocols. Week three builds the intervention sequencing logic. Week four documents the review cadence and compiles the outputs into a shareable one-pager. The constraint is almost never time. It is organizational alignment, specifically getting product, CS, and growth into the same room to agree on a shared activation definition. Teams that have already done that alignment work informally can compress the sprint significantly. Teams starting from scratch should expect the week-one alignment session to take longer than anticipated, and should plan for it rather than treating it as a quick kickoff.