How to Improve Your Customer Onboarding Process in 2026

TL;DR

Most onboarding improvement efforts fail because teams measure checklist completion (whether users finished steps) instead of activation. Product behavior data that signals onboarding stalls rarely flows into CS workflows automatically, which means intervention happens on a calendar schedule rather than when users actually need it. Closing this loop means connecting product events to automated CS plays and in-app guidance without engineering dependency, so teams catch stalls before they become churn.

Most B2B SaaS teams have the same problem. Product usage data that would tell CS exactly which accounts are stalling, which users never reached activation, and which onboarding steps are creating churn risk — that data exists. It lives in your product. And it stays there.

Without a direct connection between product behavior and CS workflows, teams default to calendar-based check-ins, manual health score updates, and reactive intervention that arrives too late. By the time a CSM flags an at-risk account, the user has already mentally checked out.

This is the problem that improving your customer onboarding process actually needs to solve in 2026. Not building a prettier product tour. Closing the gap between what your product knows and what your CS team can act on automatically, without engineering dependency.

This article shows how to make that connection work.

Why most onboarding improvements fail

Three failure modes show up repeatedly across B2B SaaS teams trying to improve their customer onboarding process. They are not design problems. They are measurement and systems problems.

The first is measuring completion instead of activation. A user who clicks through every step of your onboarding checklist and a user who reaches their first moment of real product value are not the same user. Most teams track the former and report it as success. Trial-to-paid conversion stays flat because the metric being optimized has no relationship to the behavior that predicts retention. For a practical framework on defining the right activation events for your product, see how to measure product adoption.

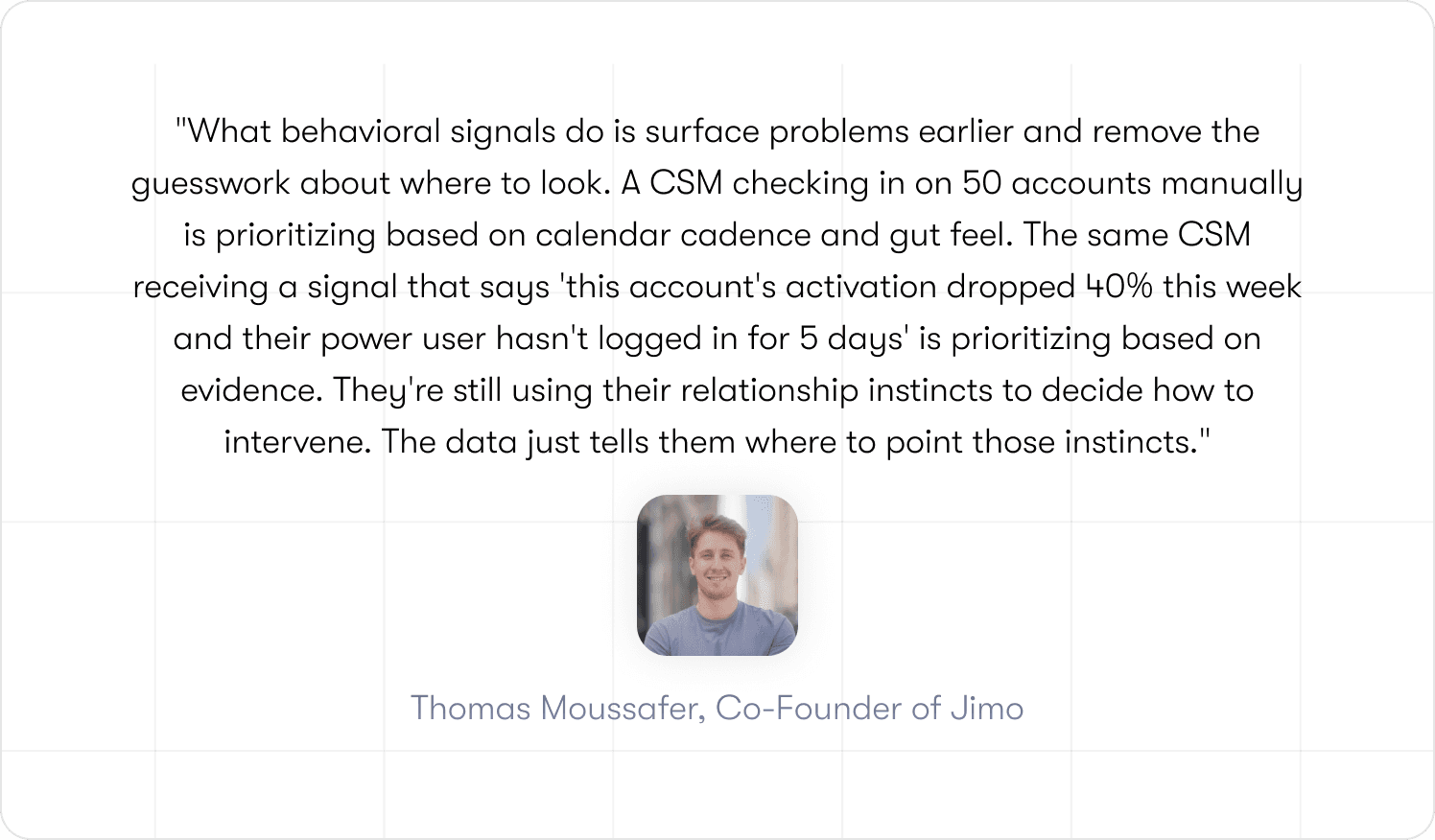

The second failure mode is the data silo. Product usage signals that would tell CS which accounts are stalling sit inside your analytics stack, disconnected from the tools your CS team actually works in. Health scores get updated manually, on a weekly cadence at best. By the time a CSM acts, the time-to-value window has already closed for most at-risk users. The data existed. It just never triggered anything.

The third is the absence of experimentation discipline. Teams ship onboarding changes based on intuition, measure overall completion rate, and call it done. Without cohort-level visibility into which specific behavioral changes moved retention metrics, there is no way to know what actually worked. Iteration stalls. The same drop-off points recur quarter after quarter because the feedback loop between product behavior and CS workflow automation was never closed.

There is a fourth failure mode that rarely gets named: the behavioral signals exist, the infrastructure gets built, and CS teams still don't act on them.

Most change management approaches frame the rollout as "the system knows better than you," and that kills adoption immediately. CSMs have real relationship context that no behavioral signal captures: they know which champion is about to go on parental leave, which account just went through a reorg, which user is frustrated but still engaged.

The practical fix is small: pick three signals, route them to CSMs as suggestions, not mandates, and let the team validate them against their own knowledge for 30 days. The signals that prove accurate earn trust organically. No change management deck required.
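One way to run that 30-day validation is to log each CSM's verdict on every routed suggestion and keep only the signals CSMs agree with often enough. A minimal Python sketch, assuming a hypothetical feedback log of (signal name, CSM agreed?) pairs; the signal names, the 70% agreement bar, and the 10-review minimum are illustrative, not prescribed by this article.

```python
from collections import defaultdict

# Hypothetical feedback log: each entry is (signal_name, csm_agreed), where the
# CSM marks whether a routed suggestion matched what they know about the account.
FeedbackLog = list[tuple[str, bool]]


def signal_precision(log: FeedbackLog) -> dict[str, tuple[int, float]]:
    """Per signal: (number of suggestions reviewed, share the CSM agreed with)."""
    agreed: dict[str, int] = defaultdict(int)
    total: dict[str, int] = defaultdict(int)
    for signal, csm_agreed in log:
        total[signal] += 1
        agreed[signal] += int(csm_agreed)
    return {s: (total[s], agreed[s] / total[s]) for s in total}


def trusted_signals(log: FeedbackLog, min_precision: float = 0.7,
                    min_samples: int = 10) -> list[str]:
    """Signals with enough reviews and a high enough agreement rate to keep routing."""
    return sorted(
        s for s, (n, p) in signal_precision(log).items()
        if n >= min_samples and p >= min_precision
    )


log = (
    [("no_activation_by_day_7", True)] * 9
    + [("no_activation_by_day_7", False)]
    + [("usage_drop_week_on_week", True)] * 4
    + [("usage_drop_week_on_week", False)] * 8
)
print(trusted_signals(log))  # ['no_activation_by_day_7']
```

The CSM stays the judge here: a signal only graduates when the people closest to the accounts confirm it, which is the trust-building mechanism the paragraph describes.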

Activation vs. completion: defining what actually matters

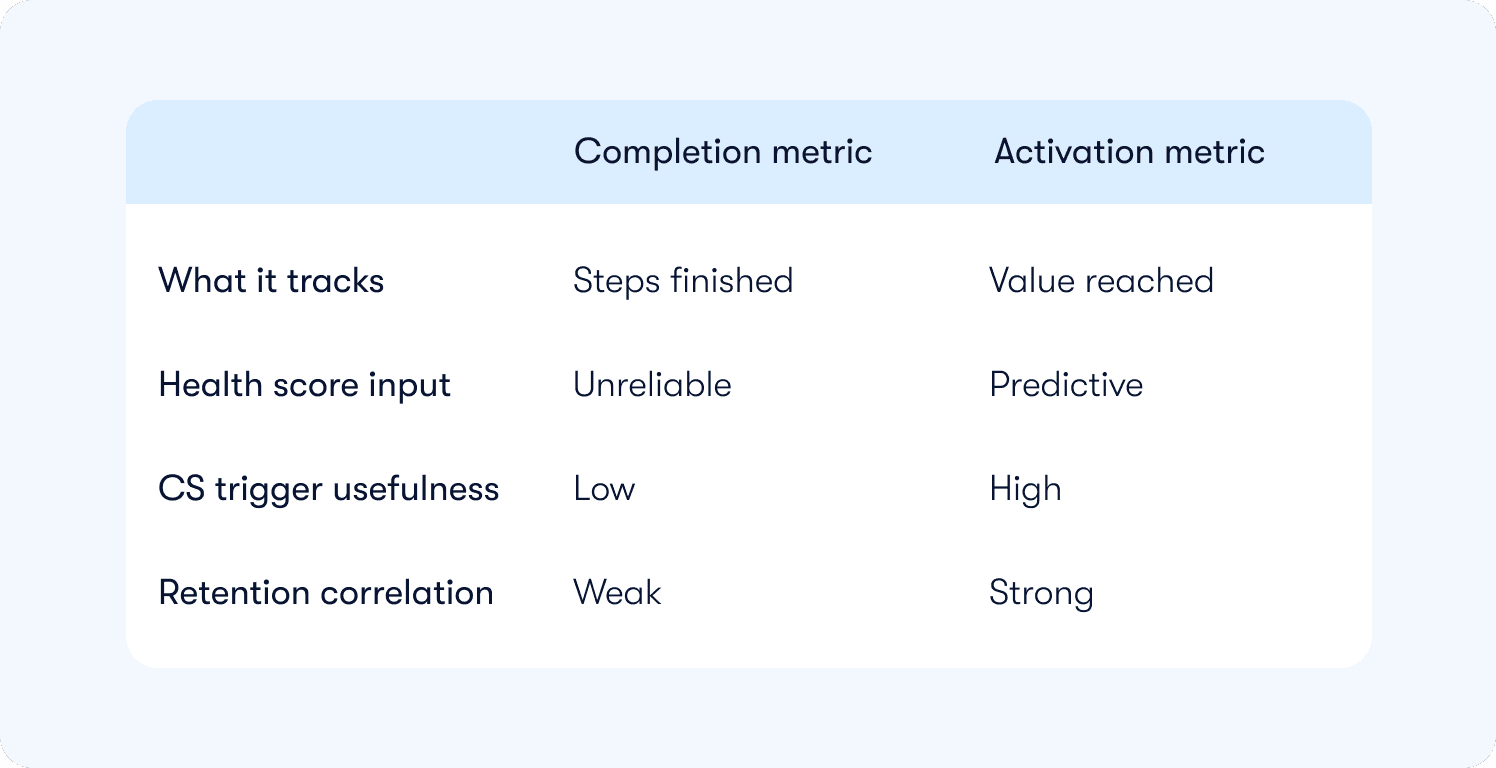

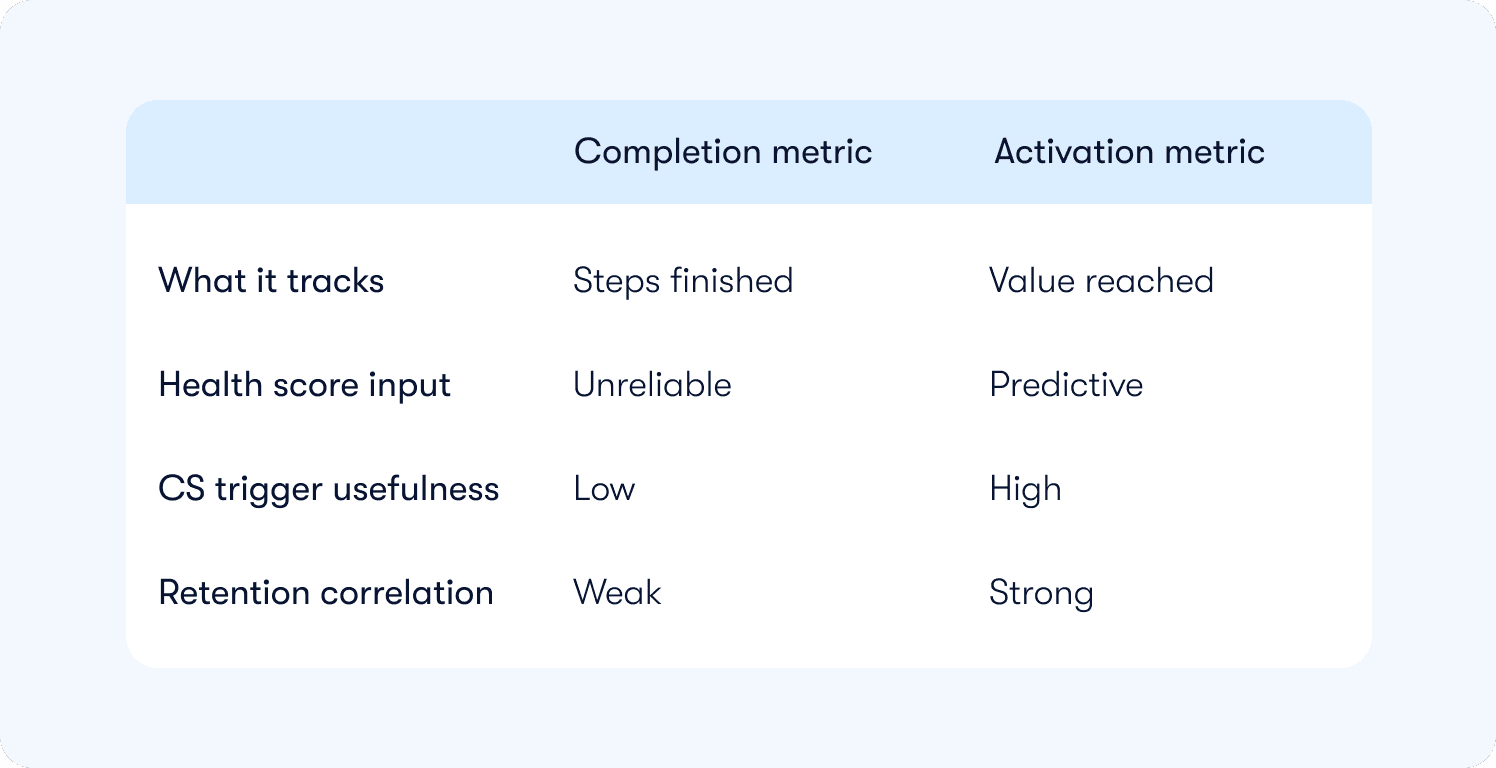

Completion measures output. Activation measures outcome.

A completed onboarding checklist tells you a user followed a sequence of steps. An activation event tells you a user reached the behavior that historically predicts they will pay, stay, and expand. These are different things, and most onboarding instrumentation tracks the wrong one.

The distinction matters for CS teams specifically because health scores built on completion data are lag measures: they reflect what already happened, not what is about to happen. An account that completed onboarding three weeks ago and has not touched the product since will still show green on a completion-based health score. A CS team relying on that score is flying blind.
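To make the difference concrete, here is a minimal Python sketch that scores the account described above both ways. The field names and the 14-day recency window are assumptions for illustration; map them to whatever your analytics stack actually records.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Account:
    # Hypothetical fields, not a specific tool's schema.
    name: str
    checklist_completed: bool
    activation_reached: bool
    last_active: date


def completion_status(acct: Account) -> str:
    """Lag measure: green once the checklist is done, regardless of usage since."""
    return "green" if acct.checklist_completed else "red"


def activation_status(acct: Account, today: date, recency_days: int = 14) -> str:
    """Lead measure: needs the activation event AND recent product activity."""
    if not acct.activation_reached:
        return "red"
    if today - acct.last_active > timedelta(days=recency_days):
        return "amber"  # reached value once, but has gone quiet
    return "green"


today = date(2026, 3, 1)
# Completed onboarding three weeks ago and has not touched the product since.
quiet = Account("acme", checklist_completed=True, activation_reached=True,
                last_active=today - timedelta(days=21))
print(completion_status(quiet))         # green
print(activation_status(quiet, today))  # amber
```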

For the full event taxonomy and instrumentation framework, see how to measure product adoption.

The minimum viable onboarding instrumentation blueprint

Most teams instrument for product analytics. Few instrument for CS action.

The difference is not which events you track. It is what those events are connected to downstream. An activation event sitting in Mixpanel that never updates a health score or triggers a CS task is just data. It does not change how anyone works.

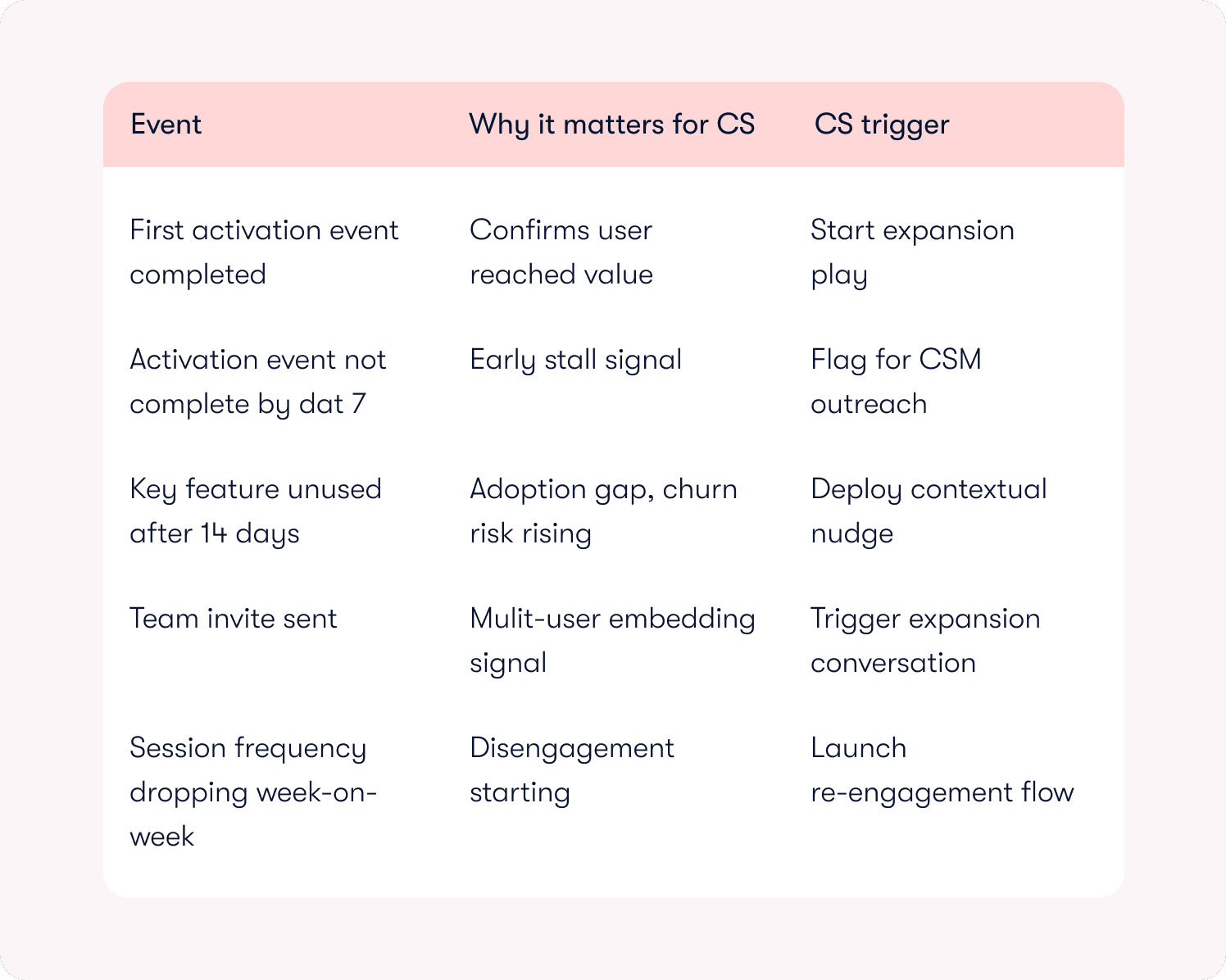

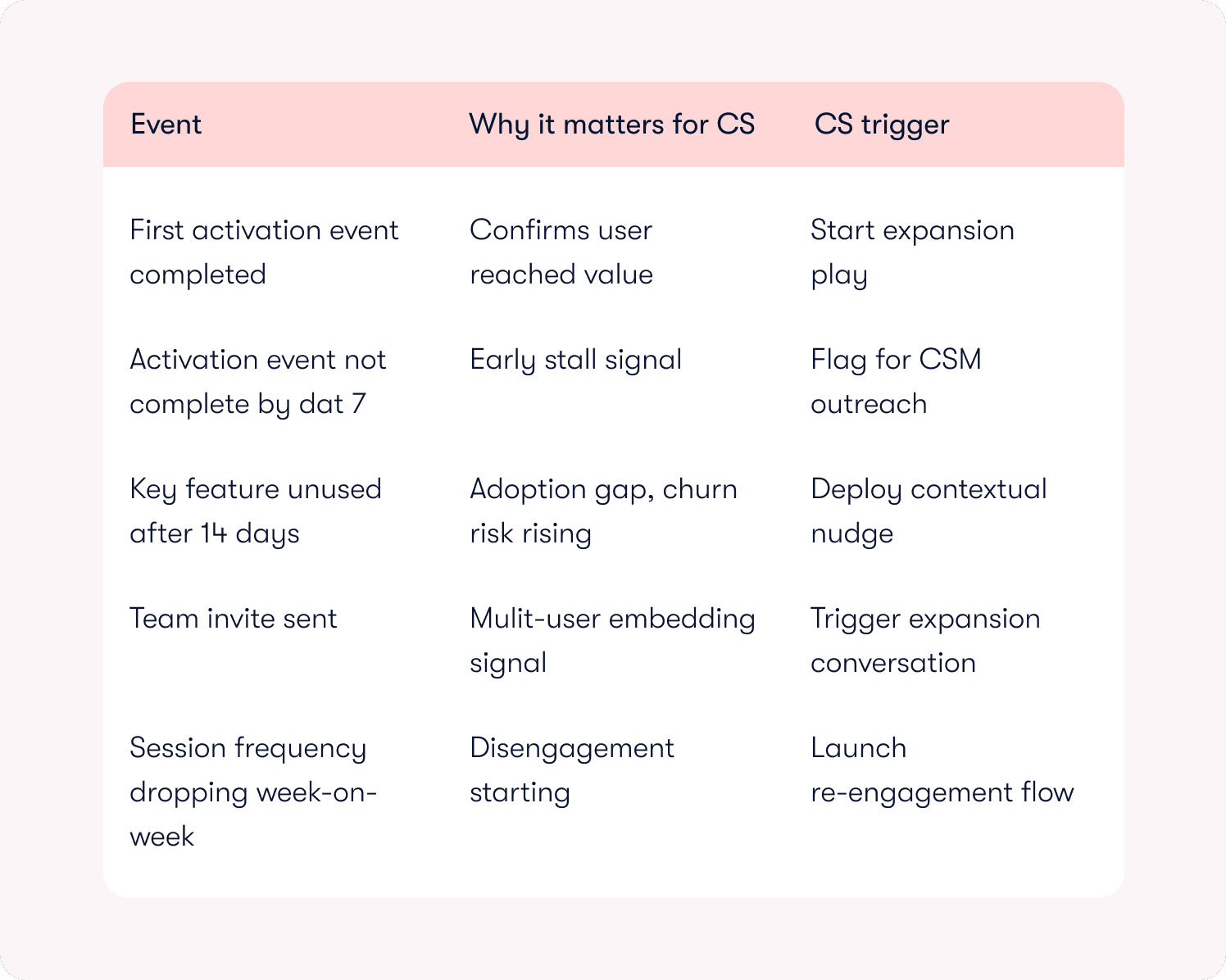

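These five events are the minimum viable set for a mid-market B2B SaaS team that wants product behavior to drive CS plays, not just dashboards: activation event completed, activation event not completed by day 7, key feature unused after 14 days, team invite sent, and session frequency dropping week-on-week.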
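A minimal Python sketch of deriving the five CS-facing events from a per-user usage snapshot. The `UserUsage` fields and event names are hypothetical, and two readings are assumptions: "key feature unused after 14 days" means never used or idle for more than 14 days once the user is at least 14 days old, and "week-on-week drop" compares two complete 7-day windows.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class UserUsage:
    # Hypothetical per-user snapshot assembled from your analytics events.
    signup: date
    activated_on: Optional[date]           # when the activation event fired, if ever
    key_feature_last_used: Optional[date]  # last use of the key feature, if ever
    invites_sent: int
    sessions_this_week: int                # last 7 complete days
    sessions_last_week: int                # the 7 days before that


def onboarding_events(u: UserUsage, today: date) -> set[str]:
    """Return which of the five CS-facing events currently apply to a user."""
    events: set[str] = set()
    age_days = (today - u.signup).days
    if u.activated_on is not None:
        events.add("activation_completed")
    elif age_days >= 7:
        events.add("activation_not_completed_by_day_7")
    # Assumed reading: at least 14 days since signup and the key feature
    # either never used or idle for more than 14 days.
    if age_days >= 14 and (
        u.key_feature_last_used is None
        or (today - u.key_feature_last_used).days > 14
    ):
        events.add("key_feature_unused_14d")
    if u.invites_sent > 0:
        events.add("team_invite_sent")
    if u.sessions_this_week < u.sessions_last_week:
        events.add("session_frequency_dropping_wow")
    return events


u = UserUsage(signup=date(2026, 1, 1), activated_on=None, key_feature_last_used=None,
              invites_sent=1, sessions_this_week=2, sessions_last_week=5)
print(sorted(onboarding_events(u, today=date(2026, 1, 20))))
# ['activation_not_completed_by_day_7', 'key_feature_unused_14d',
#  'session_frequency_dropping_wow', 'team_invite_sent']
```

What matters downstream is not the detection itself but where each event goes next: a health score input, a playbook trigger, or an in-app nudge.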

How to operationalize product usage into CS workflows

This is where most teams stop. They have the data. They have the events. They have a health score that technically reflects product behavior. And then a CSM still sends the same check-in email on day 14 regardless of what the product knows.

The missing piece is automation.

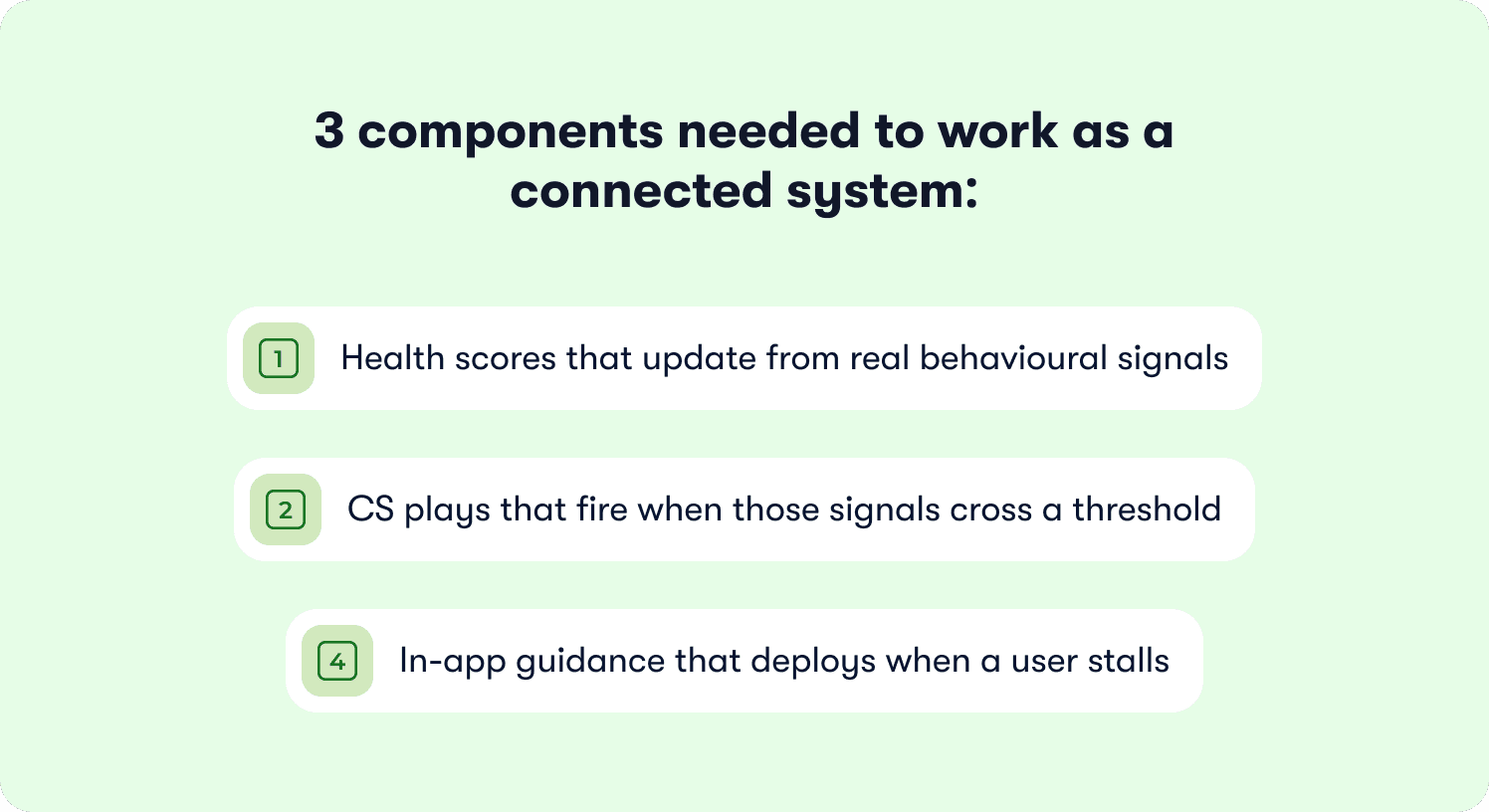

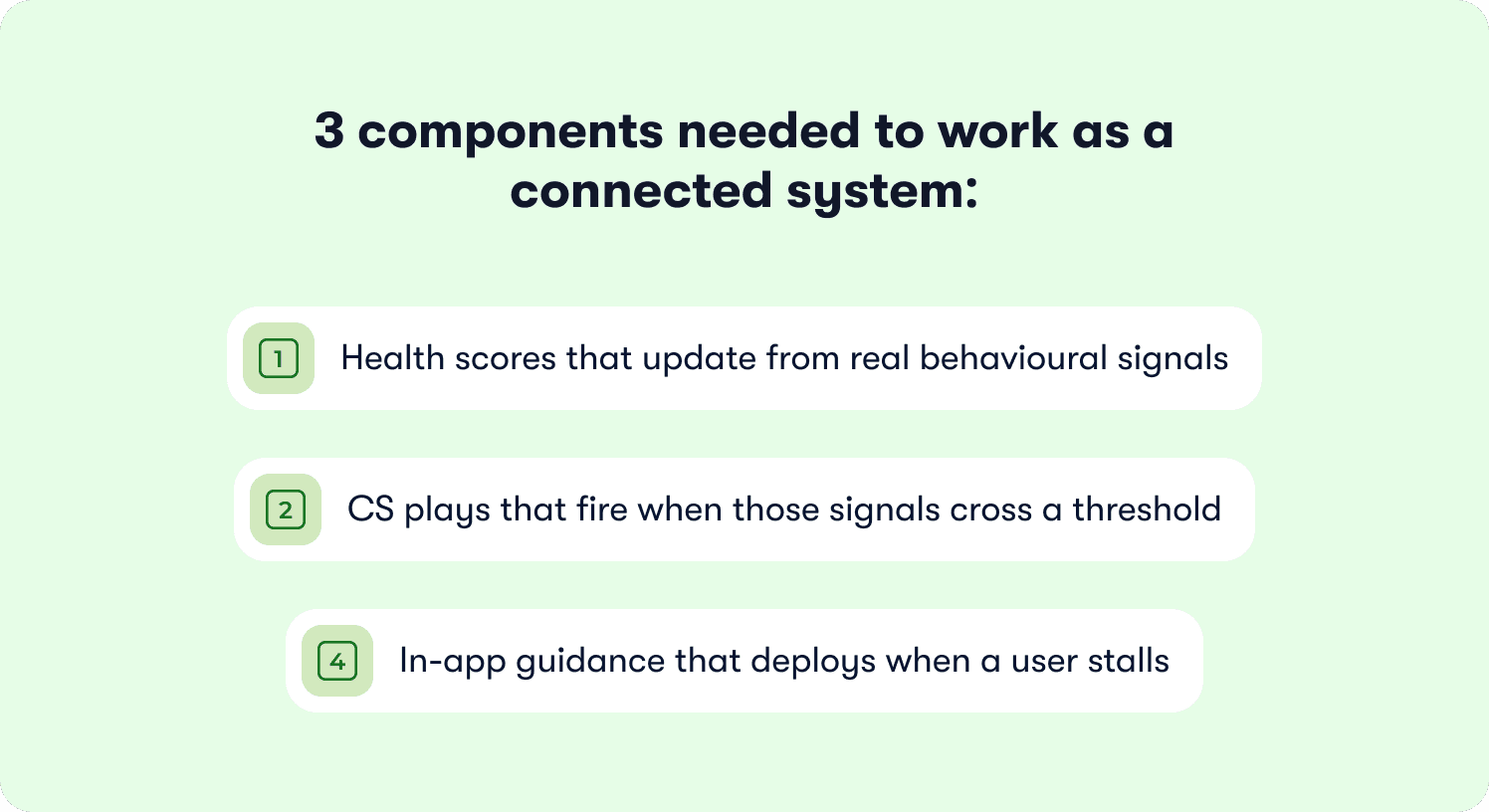

Operationalizing product usage means three things: health scores that update automatically from real behavioral signals, CS plays that fire when those signals cross a threshold, and in-app guidance that deploys at the exact moment a user stalls, before a ticket gets raised or a CSM notices. These three components need to work as a connected system, not as three separate manual processes.

Health score inputs should map directly to activation depth. Not logins. Not checklist completion. Whether a user has reached the activation event, how many team members are active, which high-value features have been touched in the last 14 days. These signals, pulled automatically via analytics segments, give CS a score that actually predicts risk rather than confirming it after the fact.
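A minimal Python sketch of a health score built only from the inputs named above: activation reached, active team members, and high-value features touched in the last 14 days. The weights (50/30/20), the five-seat cap, and the feature names are hypothetical; calibrate them against your own retention data.

```python
from dataclasses import dataclass, field


@dataclass
class AccountSignals:
    # Hypothetical inputs, e.g. refreshed from analytics segments on a schedule.
    activation_reached: bool
    active_members: int
    features_used_14d: set[str] = field(default_factory=set)


HIGH_VALUE_FEATURES = {"integrations", "reports", "automations"}  # illustrative


def health_score(s: AccountSignals, seat_target: int = 5) -> int:
    """Return a 0-100 score weighted toward activation depth, not logins."""
    activation = 50 if s.activation_reached else 0
    # Breadth: active team members, capped at the seat target.
    breadth = 30 * min(s.active_members, seat_target) / seat_target
    # Depth: share of high-value features touched in the last 14 days.
    touched = s.features_used_14d & HIGH_VALUE_FEATURES
    depth = 20 * len(touched) / len(HIGH_VALUE_FEATURES)
    return round(activation + breadth + depth)


print(health_score(AccountSignals(True, 3, {"integrations", "reports", "chat"})))  # 81
print(health_score(AccountSignals(False, 1)))  # 6
```

Logins and checklist steps are deliberately absent from the inputs, so an account cannot score well just by showing up.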

Playbook triggers should fire on behavioral thresholds, not dates. A user who hits day 10 without completing the activation event needs a different response than a user who completed it on day 2 and has not logged in since. Calendar-based plays treat them identically. Behavior-based plays do not.
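The two users in that example can be routed to different plays with a rule like the one below. A minimal Python sketch: the day-10 threshold comes from the text, while the play names and the 7-day dormancy window are assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class UserState:
    days_since_signup: int
    activated_on_day: Optional[int]  # day the activation event fired, if ever
    days_since_last_login: int


def choose_play(u: UserState, stall_day: int = 10,
                dormant_days: int = 7) -> Optional[str]:
    """Pick a CS play from behavioral thresholds rather than the calendar."""
    if u.activated_on_day is None and u.days_since_signup >= stall_day:
        return "activation_rescue"  # never reached value: help them get there
    if u.activated_on_day is not None and u.days_since_last_login >= dormant_days:
        return "re_engagement"      # reached value, then went quiet
    return None                     # healthy or still early: no play fires


stalled = UserState(days_since_signup=10, activated_on_day=None, days_since_last_login=0)
dormant = UserState(days_since_signup=10, activated_on_day=2, days_since_last_login=8)
print(choose_play(stalled))  # activation_rescue
print(choose_play(dormant))  # re_engagement
```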

In-app guidance fills the gap between the signal and the CSM. When a user stalls on a specific feature, a contextual nudge deployed at that exact point can resolve the friction before it becomes a support ticket or a churn signal. This is not onboarding. It is the operational layer that sits between your product data and your CS team, doing the work that currently falls through the gap between them.

Jimo connects to your existing stack, including Segment, Mixpanel, and CRMs, through native integrations, so the events you are already tracking can start driving CS plays without rebuilding your data infrastructure.

A cohort-based experimentation playbook for onboarding

Most onboarding experiments answer the wrong question. Teams test button copy, tour length, and checklist order. They measure completion rate. Nothing moves in retention. The experiment gets filed away and the same drop-off point appears in next quarter's review.

The right question is not "which version of this flow performed better?" It is "which user behaviors, when they change, actually move CS outcomes?"

That reframe changes what you test and what you measure.

Start with a behavioral hypothesis, not a design hypothesis. Not "shorter tours will increase completion" but "users who complete the team invite step within 48 hours of signup retain at 2x the rate of users who do not." That hypothesis connects a specific in-product behavior to a downstream CS outcome. It tells you exactly what to instrument, what to change, and what success looks like.
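Testing the team-invite hypothesis comes down to comparing day-30 retention between two cohorts. A minimal Python sketch, assuming hypothetical per-user records of signup time, first invite time, and day-30 retention. The 2x figure in the text is an example hypothesis, not a benchmark, and a raw ratio like this shows correlation only; with real data you would also want a significance check before acting on it.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class TrialUser:
    # Hypothetical per-user record.
    signed_up: datetime
    first_invite: Optional[datetime]
    retained_day_30: bool


def invited_within(u: TrialUser, window: timedelta = timedelta(hours=48)) -> bool:
    return u.first_invite is not None and u.first_invite - u.signed_up <= window


def retention_lift(users: list[TrialUser]) -> float:
    """Day-30 retention of fast inviters divided by retention of everyone else."""
    fast = [u for u in users if invited_within(u)]
    rest = [u for u in users if not invited_within(u)]

    def rate(group: list[TrialUser]) -> float:
        return sum(u.retained_day_30 for u in group) / len(group)

    if not fast or not rest or rate(rest) == 0:
        raise ValueError("need non-empty cohorts and non-zero baseline retention")
    return rate(fast) / rate(rest)


t0 = datetime(2026, 1, 5, 9, 0)
users = [
    TrialUser(t0, t0 + timedelta(hours=3), True),
    TrialUser(t0, t0 + timedelta(hours=30), True),
    TrialUser(t0, t0 + timedelta(days=5), False),
    TrialUser(t0, None, True),
    TrialUser(t0, None, False),
    TrialUser(t0, None, False),
]
print(retention_lift(users))  # 4.0
```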

Structure experiments around cohorts defined by activation depth, not by the version of the flow they saw. Users who reached the activation event versus users who did not. Users who completed the team invite step versus users who skipped it. Users flagged by retention insights as disengaging in week two versus users who stayed active. These cohorts tell you where CS plays need to fire and whether they are working.

Three guardrail metrics keep experimentation honest. Trial-to-paid conversion for the cohort. Day-30 retention by activation milestone reached. CS intervention rate — whether the proportion of accounts requiring manual CSM outreach is going up or down. If an onboarding change does not move at least one of these, it is not an improvement. It is a redesign.
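The three guardrails can be computed per cohort from a handful of per-account outcomes. A minimal Python sketch; the `CohortAccount` fields and milestone names are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class CohortAccount:
    # Hypothetical per-account outcomes for one experiment cohort.
    milestone: str                # deepest activation milestone reached
    converted_to_paid: bool
    retained_day_30: bool
    needed_manual_outreach: bool


def guardrails(cohort: list[CohortAccount]) -> dict[str, float]:
    """Trial-to-paid conversion and CS intervention rate for the whole cohort."""
    if not cohort:
        raise ValueError("empty cohort")
    n = len(cohort)
    return {
        "trial_to_paid": sum(a.converted_to_paid for a in cohort) / n,
        "cs_intervention_rate": sum(a.needed_manual_outreach for a in cohort) / n,
    }


def retention_by_milestone(cohort: list[CohortAccount]) -> dict[str, float]:
    """Day-30 retention split by the activation milestone each account reached."""
    groups: dict[str, list[CohortAccount]] = defaultdict(list)
    for a in cohort:
        groups[a.milestone].append(a)
    return {m: sum(a.retained_day_30 for a in g) / len(g) for m, g in groups.items()}


cohort = [
    CohortAccount("activated", True, True, False),
    CohortAccount("activated", False, True, False),
    CohortAccount("team_invited", True, True, True),
    CohortAccount("none", False, False, True),
]
print(guardrails(cohort))              # {'trial_to_paid': 0.5, 'cs_intervention_rate': 0.5}
print(retention_by_milestone(cohort))  # {'activated': 1.0, 'team_invited': 1.0, 'none': 0.0}
```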

Decision rules:

Ship the change if trial-to-paid conversion improves by 10% or more for the target cohort within 30 days and CS intervention rate holds flat or drops.

Kill the change if completion rate improves but retention and conversion are flat. Completion is not the goal.

Iterate if the signal is directionally positive but below threshold. Isolate the specific step driving the lift and test a more targeted intervention.

Calibrate these thresholds based on your switching cost and maintenance burden. Interventions that require ongoing manual work need higher lift thresholds to justify the operational investment.
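Encoding the decision rules as a function keeps every experiment judged the same way. A minimal Python sketch under two assumptions: the 10% threshold is read as a relative lift (the rules do not say relative or absolute), and "flat" is treated naively as "no improvement", with no noise tolerance. The `min_lift` parameter is where the calibration described above would go.

```python
from dataclasses import dataclass


@dataclass
class CohortResult:
    # Target-cohort metrics over the 30-day window (hypothetical inputs).
    trial_to_paid: float
    day_30_retention: float
    cs_intervention_rate: float


def decide(control: CohortResult, variant: CohortResult, min_lift: float = 0.10) -> str:
    """Apply the ship / kill / iterate rules to a control vs. variant comparison.

    Completion rate is deliberately not an input: a change that only lifts
    completion, with flat conversion and retention, falls through to "kill".
    """
    if control.trial_to_paid <= 0:
        raise ValueError("control conversion must be positive to compute relative lift")
    # Relative lift in trial-to-paid conversion (assumed reading of "10%").
    conv_lift = (variant.trial_to_paid - control.trial_to_paid) / control.trial_to_paid
    cs_held = variant.cs_intervention_rate <= control.cs_intervention_rate
    if conv_lift >= min_lift and cs_held:
        return "ship"
    improved = (variant.trial_to_paid > control.trial_to_paid
                or variant.day_30_retention > control.day_30_retention)
    return "iterate" if improved else "kill"


control = CohortResult(trial_to_paid=0.20, day_30_retention=0.40, cs_intervention_rate=0.15)
print(decide(control, CohortResult(0.23, 0.41, 0.14)))  # ship (about 15% relative lift)
print(decide(control, CohortResult(0.21, 0.40, 0.15)))  # iterate (about 5% lift)
print(decide(control, CohortResult(0.20, 0.40, 0.15)))  # kill
```

An intervention that needs ongoing manual work would simply be judged with a larger `min_lift`.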

Reducing support volume by guiding users before they get stuck

Support tickets are a lagging signal.

By the time a user raises one, friction has already won. They tried, got stuck, and decided asking for help was easier than pushing through. The more dangerous users are the ones who never submit a ticket at all. They just quietly stop showing up.

The teams reducing support volume are not doing it by writing better documentation. They are identifying where users stall before the ticket gets raised and deploying self-service guidance at that exact point.

The operational logic

A user spends three minutes on the integrations page without taking action. That behavioral signal triggers a contextual hint explaining the next step. The user completes the integration. No ticket raised. Health score updated to reflect a completed high-value action rather than a stall.
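The trigger in this example is a dwell-time rule: time on a page with no action taken there. A minimal Python sketch over a hypothetical event stream; the event kinds are illustrative, the 180-second default is the three minutes from the example, and where such a rule actually runs depends on your tooling.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Event:
    ts: float   # seconds since session start
    page: str
    kind: str   # hypothetical kinds: "page_view", "click", ...


def stalled_on(events: list[Event], page: str, now: float,
               threshold_s: float = 180.0) -> bool:
    """True if the user has sat on `page` for threshold_s without acting there."""
    entered_at: Optional[float] = None
    for e in sorted(events, key=lambda ev: ev.ts):
        if e.page != page:
            entered_at = None      # navigated away: reset
        elif e.kind == "page_view":
            if entered_at is None:
                entered_at = e.ts  # arrived on the page
        else:
            entered_at = None      # took an action on the page: not stalled
    return entered_at is not None and now - entered_at >= threshold_s


events = [Event(0, "dashboard", "page_view"), Event(40, "integrations", "page_view")]
print(stalled_on(events, "integrations", now=230))  # True: 190 s with no action
acted = events + [Event(100, "integrations", "click")]
print(stalled_on(acted, "integrations", now=230))   # False
```

When `stalled_on` returns True, that is the moment to show the contextual hint; the later completion event is what updates the health score.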

This works because the intervention happens at the moment of friction, not after it.

Where CS ops comes in

Most teams treat deflection as an onboarding metric. It belongs in your health scoring model.

Every ticket that does not get raised because in-app guidance resolved the friction is a behavioral data point. Aggregate those across an account and a pattern emerges. Accounts where guidance consistently deflects friction are embedding. Accounts where guidance is repeatedly dismissed or ignored are signaling risk.

Ticket volume alone does not show you this distinction. Deflection data connected directly to health scoring does.
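Turning guidance outcomes into an account-level health input can start as a simple resolution ratio. A minimal Python sketch, assuming each in-app guidance impression is logged with a hypothetical outcome such as "completed" (the user finished the guided step), "dismissed", or "ignored". The 0.6 and 0.3 bands and the five-impression minimum are illustrative.

```python
from collections import Counter


def guidance_signal(outcomes: list[str], min_impressions: int = 5) -> str:
    """Classify an account from the outcomes of its in-app guidance impressions."""
    if len(outcomes) < min_impressions:
        return "insufficient_data"
    resolved = Counter(outcomes)["completed"] / len(outcomes)
    if resolved >= 0.6:
        return "embedding"  # guidance consistently resolves friction
    if resolved <= 0.3:
        return "at_risk"    # guidance repeatedly dismissed or ignored
    return "neutral"


print(guidance_signal(["completed"] * 4 + ["dismissed"]))  # embedding
print(guidance_signal(["dismissed"] * 5 + ["completed"]))  # at_risk
```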

This operational logic points toward a larger shift in what onboarding is becoming.

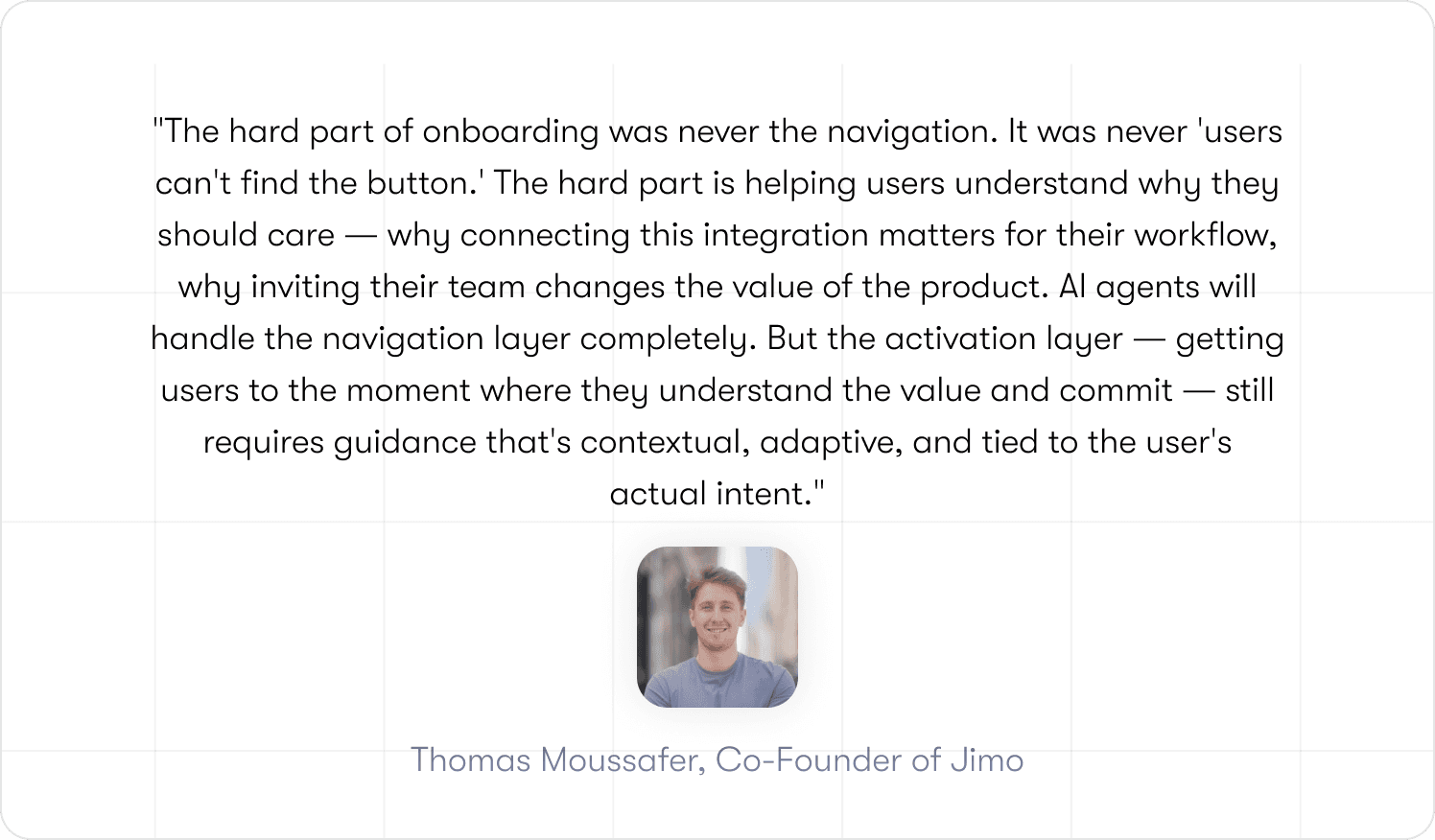

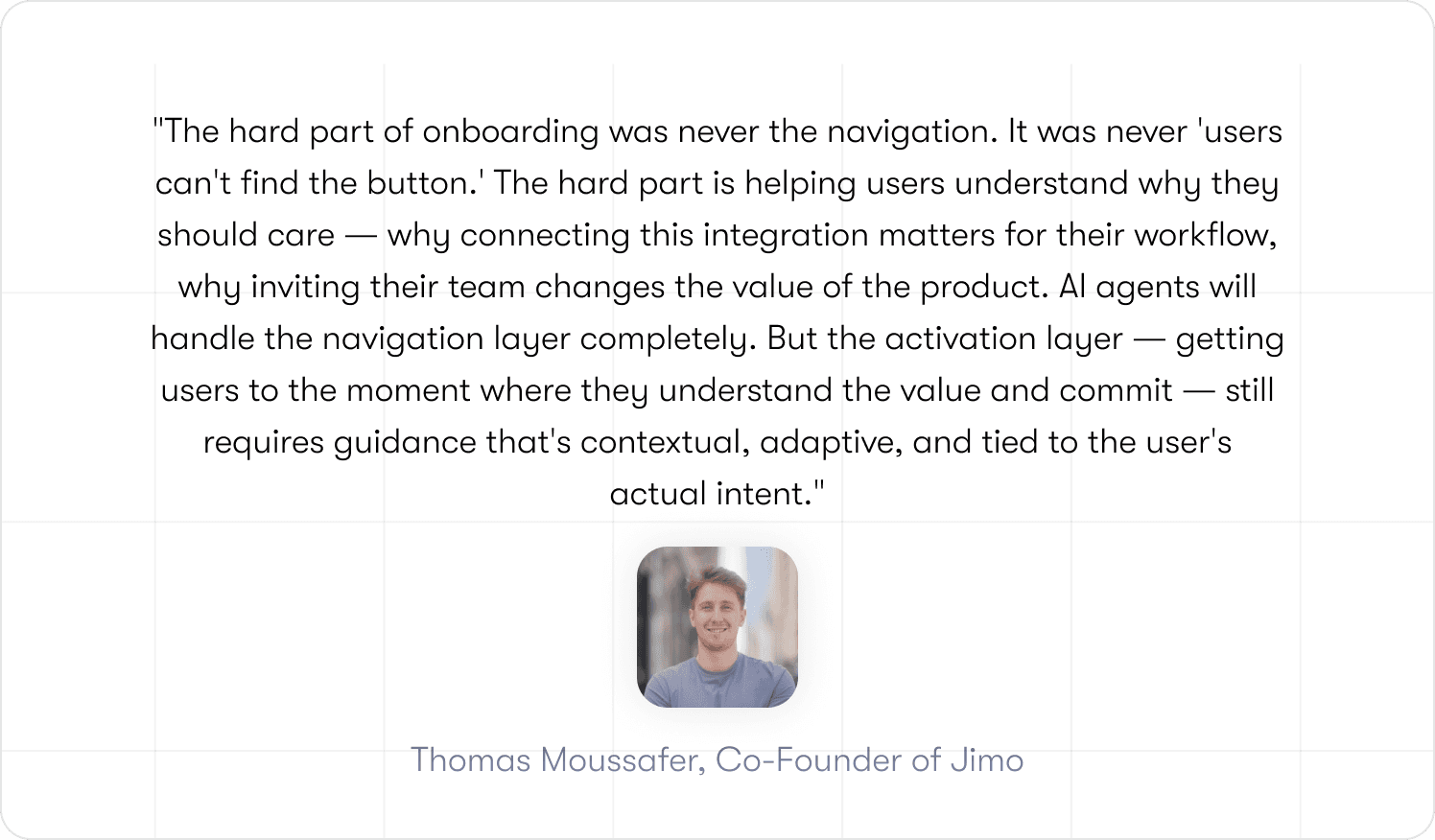

The "click here, then here, then here" model of product tours is already dying. When an AI agent can read your product's interface, understand the user's context, and execute actions on their behalf, there's no reason to walk a human through a 5-step checklist.

What changes is that onboarding shifts from teaching users how to use the product to ensuring they experience why the product matters, faster. The agent handles setup. The adaptive guidance handles the "aha moment." The friction disappears — not because the need for guidance went away, but because the guidance got smarter.

How Jimo closes the loop from insight to intervention

Most adoption tools give you visibility. The drop-off point is visible. The at-risk account is visible. The feature that nobody is using is visible. What they do not give you is the next step. That still falls to a CSM, a manual export, or a developer ticket.

Jimo is built around closing that gap.

The flow works in three connected steps. First, Success Tracker tags activation events and behavioral milestones directly in your product without engineering involvement. No JavaScript. No developer tickets. A PM or CS ops manager clicks the element, defines the goal, and the tracking is live. Second, funnel analysis surfaces exactly where users are dropping off and which accounts are stalling below the activation threshold. Third, in-app experiences deploy automatically at those drop-off points. Tours, hints, checklists, announcements — triggered by the behavioral signal, targeted to the right user segment, without touching the codebase.

The result is a system where the insight and the intervention live in the same platform. A user stalls on day 7. Jimo detects it, deploys a contextual nudge, and flags the account for CS if the nudge does not resolve the friction. The CSM receives a signal driven by product behavior, not a calendar reminder.
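The escalation pattern in that example (detect the stall, nudge first, flag the account for CS only if the nudge does not resolve it) can be sketched as a small state machine. This is a generic Python illustration, not Jimo's implementation or API; the stage names and the 48-hour grace period are assumptions.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Stage(Enum):
    ON_TRACK = "on_track"
    NUDGED = "nudged"
    FLAGGED_FOR_CS = "flagged_for_cs"
    RESOLVED = "resolved"


@dataclass
class StallCase:
    stage: Stage = Stage.ON_TRACK
    nudged_at_hour: Optional[float] = None


def step(case: StallCase, hour: float, stalled: bool, activated: bool,
         grace_hours: float = 48) -> StallCase:
    """Advance one evaluation tick: nudge first, escalate to CS only if needed."""
    if activated and case.stage in (Stage.NUDGED, Stage.FLAGGED_FOR_CS):
        return StallCase(Stage.RESOLVED)
    if case.stage is Stage.ON_TRACK and stalled:
        return StallCase(Stage.NUDGED, nudged_at_hour=hour)  # deploy in-app nudge
    if (case.stage is Stage.NUDGED and not activated
            and hour - case.nudged_at_hour >= grace_hours):
        return StallCase(Stage.FLAGGED_FOR_CS, case.nudged_at_hour)  # open CS task
    return case


case = StallCase()
case = step(case, hour=168, stalled=True, activated=False)  # day 7: nudge
case = step(case, hour=200, stalled=True, activated=False)  # still in grace period
case = step(case, hour=216, stalled=True, activated=False)  # nudge did not help
print(case.stage.value)  # flagged_for_cs
```

The CSM only hears about the account after the cheaper intervention has had its chance, which is what makes the resulting signal worth acting on.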

For teams already using Segment, Mixpanel, or a CRM, Jimo connects through native integrations so existing event infrastructure feeds directly into the targeting and trigger logic. You are not rebuilding your stack. You are connecting the parts that were never talking to each other.

The full toolkit — tours, hints, checklists, resource center, success tracker — is covered at jimo.ai/tools. For real examples of how CS and product teams have used it, customer stories is the right starting point.

From calendar-based check-ins to behavior-driven plays

The calendar-based CS model made sense when product behavior data was hard to capture and harder to act on. It does not make sense anymore.

Check-ins on day 14 and day 30 are not a retention strategy. They are a scheduling convention. They treat every account the same regardless of what the product knows about each one. An account that activated on day 3 and has three team members active does not need a day-14 check-in. An account that has not completed the activation event by day 7 needed an intervention a week ago.

Product-led growth teams figured this out first. When the product is the primary driver of conversion and retention, CS plays have to be connected to product signals or they are just noise. The same logic applies to any B2B SaaS team running a self-serve or hybrid motion.

Behavior-driven plays are not more work. They are less work on the right accounts at the right time. When the signal comes from product behavior, CSMs stop spending time on healthy accounts that looked quiet and start focusing on the accounts where friction is actually building.

The shift is not complicated to describe. It is hard to execute without the right infrastructure connecting product data to CS workflows automatically. That infrastructure is what this article has been building toward.

But infrastructure is only half the problem. The other half is getting CS teams to actually trust it.

The teams that get this right in 2026 will not be the ones with the best onboarding design. They will be the ones who closed the loop between what their product knows and what their CS team does about it.

Ready to see how it works in practice? See Jimo in action and learn how teams are catching onboarding stalls before they become churn.

FAQs

How can the customer onboarding process be improved?

The highest-leverage change most teams can make is connecting product behavior to CS action automatically. That means defining the activation event that predicts retention, instrumenting it without engineering dependency, and triggering CS plays when users do not reach it within a defined window. Better design helps. Automation is what scales it.

How do you operationalize product usage data for CS teams?

Start with five behavioral events that map to CS outcomes: activation event completed, activation event not completed by day 7, key feature unused after 14 days, team invite sent, and session frequency dropping week-on-week. Connect those events to health score inputs and playbook triggers. The events you are already tracking in your analytics stack can drive CS plays without rebuilding your data infrastructure if the right integration layer is in place.

What is the difference between onboarding completion and activation?

Completion measures whether a user finished a sequence of steps. Activation measures whether a user reached the behavior that predicts they will retain and pay. Health scores built on completion data are lag measures. They confirm what already happened rather than signaling what is about to happen. For a full breakdown, see how to measure product adoption.

How do integration platforms improve customer onboarding?

Integration platforms close the gap between where product behavior data lives and where CS teams work. Without integration, usage signals stay inside your analytics stack and health scores get updated manually. With integration, behavioral thresholds automatically update health scores, trigger CS tasks, and deploy in-app guidance without manual intervention or engineering sprints.

What are the best AI solutions for improving customer onboarding?

The most effective AI solutions for onboarding connect behavioral data to automated interventions in real time. The category to evaluate is AI-powered digital adoption platforms, which combine no-code event tracking, behavior-triggered in-app guidance, and CS workflow automation in a single system. The key differentiator to look for is whether the platform closes the full loop from insight to intervention or whether it stops at visibility and leaves the action step to a manual process.

TL;DR

Most onboarding improvement efforts fail because teams measure checklist completion instead of activation or tracking whether users finished steps. Product behavior data that signals onboarding stalls rarely flows into CS workflows automatically, which means intervention happens on a calendar schedule rather than when users actually need it. Closing this loop means connecting product events to automated CS plays and in-app guidance without engineering dependency, so teams catch stalls before they become churn.

Most B2B SaaS teams have the same problem. Product usage data that would tell CS exactly which accounts are stalling, which users never reached activation, and which onboarding steps are creating churn risk — that data exists. It lives in your product. And it stays there.

Without a direct connection between product behavior and CS workflows, teams default to calendar-based check-ins, manual health score updates, and reactive intervention that arrives too late. By the time a CSM flags an at-risk account, the user has already mentally checked out.

This is the problem that improving your customer onboarding process actually needs to solve in 2026. Not building a prettier product tour. Closing the gap between what your product knows and what your CS team can act on automatically, without engineering dependency.

This article shows how to make that connection work.

Why most onboarding improvements fail

Three failure modes show up repeatedly across B2B SaaS teams trying to improve their customer onboarding process. They are not design problems. They are measurement and systems problems.

The first is measuring completion instead of activation. A user who clicks through every step of your onboarding checklist and a user who reaches their first moment of real product value are not the same user. Most teams track the former and report it as success. Trial-to-paid conversion stays flat because the metric being optimized has no relationship to the behavior that predicts retention. For a practical framework on defining the right activation events for your product, see how to measure product adoption.

The second failure mode is the data silo. Product usage signals that would tell CS which accounts are stalling sit inside your analytics stack, disconnected from the tools your CS team actually works in. Health scores get updated manually, on a weekly cadence at best. By the time a CSM acts, the time to value window has already closed for most at-risk users. The data existed. It just never triggered anything.

The third is the absence of experimentation discipline. Teams ship onboarding changes based on intuition, measure overall completion rate, and call it done. Without cohort-level visibility into which specific behavioral changes moved retention metrics, there is no way to know what actually worked. Iteration stalls. The same drop-off points recur quarter after quarter because the feedback loop between product behavior and CS workflow automation was never closed.

There is a fourth failure mode that rarely gets named: the behavioral signals exist, the infrastructure gets built, and CS teams still don't act on it.

Most change management approaches frame it as "the system knows better than you" and that kills adoption immediately. CSMs have real relationship context that no behavioral signal captures: they know which champion is about to go on parental leave, which account just went through a reorg, which user is frustrated but still engaged.

The practical fix is small: pick three signals, route them to CSMs as suggestions not mandates, and let the team validate them against their own knowledge for 30 days. The signals that prove accurate earn trust organically. No change management deck required.

Activation vs. completion: defining what actually matters

Completion measures output. Activation measures outcome.

A completed onboarding checklist tells you a user followed a sequence of steps. An activation event tells you a user reached the behavior that historically predicts they will pay, stay, and expand. These are different things, and most onboarding instrumentation tracks the wrong one.

The distinction matters for CS teams specifically because health scores built on completion data are lag measures and they reflect what already happened, not what is about to happen. An account that completed onboarding three weeks ago and has not touched the product since will still show green on a completion-based health score. A CS team relying on that score is flying blind.

For the full event taxonomy and instrumentation framework, see how to measure product adoption.

The minimum viable onboarding instrumentation blueprint

Most teams instrument for product analytics. Few instrument for CS action.

The difference is not which events you track. It is what those events are connected to downstream. An activation event sitting in Mixpanel that never updates a health score or triggers a CS task is just data. It does not change how anyone works.

The five events below are the minimum viable set for a mid-market B2B SaaS team that wants product behavior to drive CS plays, not just dashboards.

📖 Fixing onboarding? Here are 19 proven tactics used by SaaS scaleups to drive activation → Get our free playbook

How to operationalize product usage into CS workflows

This is where most teams stop. They have the data. They have the events. They have a health score that technically reflects product behavior. And then a CSM still sends the same check-in email on day 14 regardless of what the product knows.

The gap remains focused on automation.

Operationalizing product usage means three things: health scores that update from real behavioral signals using behavior metrics, CS plays that fire when those signals cross a threshold, and in-app guidance that deploys at the exact moment a user stalls — before a ticket gets raised or a CSM notices. These three components need to work as a connected system, not as three separate manual processes.

Health score inputs should map directly to activation depth. Not logins. Not checklist completion. Whether a user has reached the activation event, how many team members are active, which high-value features have been touched in the last 14 days. These signals, pulled automatically via analytics segments, give CS a score that actually predicts risk rather than confirming it after the fact.

Playbook triggers should fire on behavioral thresholds, not dates. A user who hits day 10 without completing the activation event needs a different response than a user who completed it on day 2 and has not logged in since. Calendar-based plays treat them identically. Behavior-based plays do not.

In-app guidance fills the gap between the signal and the CSM. When a user stalls on a specific feature, a contextual nudge deployed at that exact point can resolve the friction before it becomes a support ticket or a churn signal. This is not onboarding. It is the operational layer that sits between your product data and your CS team, doing the work that currently falls through the gap between them.

Jimo connects to your existing stack: Segment, Mixpanel, and CRMs through native integrations, so the events you are already tracking can start driving CS plays without rebuilding your data infrastructure.

A cohort-based experimentation playbook for onboarding

Most onboarding experiments answer the wrong question. Teams test button copy, tour length, and checklist order. They measure completion rate. Nothing moves in retention. The experiment gets filed away and the same drop-off point appears in next quarter's review.

The right question is not "which version of this flow performed better?" It is "which user behaviors, when they change, actually move CS outcomes?"

That reframe changes what you test and what you measure.

Start with a behavioral hypothesis, not a design hypothesis. Not "shorter tours will increase completion" but "users who complete the team invite step within 48 hours of signup retain at 2x the rate of users who do not." That hypothesis connects a specific in-product behavior to a downstream CS outcome. It tells you exactly what to instrument, what to change, and what success looks like.

Structure experiments around cohorts defined by activation depth, not by the version of the flow they saw. Users who reached the activation event versus users who did not. Users who completed the team invite step versus users who skipped it. Users flagged by retention insights as disengaging in week two versus users who stayed active. These cohorts tell you where CS plays need to fire and whether they are working.

Three guardrail metrics keep experimentation honest. Trial-to-paid conversion for the cohort. Day-30 retention by activation milestone reached. CS intervention rate — whether the proportion of accounts requiring manual CSM outreach is going up or down. If an onboarding change does not move at least one of these, it is not an improvement. It is a redesign.

Decision rules:

Ship the change if trial-to-paid conversion improves by 10% or more for the target cohort within 30 days and CS intervention rate holds flat or drops.

Kill the change if completion rate improves but retention and conversion are flat. Completion is not the goal.

Iterate if the signal is directionally positive but below threshold. Isolate the specific step driving the lift and test a more targeted intervention.

Calibrate these thresholds based on your switching cost and maintenance burden. Interventions that require ongoing manual work need higher lift thresholds to justify the operational investment.

Reducing support volume by guiding users before they get stuck

Support tickets are a lagging signal.

By the time a user raises one, friction has already won. They tried, got stuck, and decided asking for help was easier than pushing through. The more dangerous users are the ones who never submit a ticket at all. They just quietly stop showing up.

The teams reducing support volume are not doing it by writing better documentation. They are identifying where users stall before the ticket gets raised and deploying self-service guidance at that exact point.

The operational logic

A user spends three minutes on the integrations page without taking action. That behavioral signal triggers a contextual hint explaining the next step. The user completes the integration. No ticket raised. Health score updated to reflect a completed high-value action rather than a stall.

This works because the intervention happens at the moment of friction, not after it.

Where CS ops comes in

Most teams treat deflection as an onboarding metric. It belongs in your health scoring model.

Every ticket that does not get raised because in-app guidance resolved the friction is a behavioral data point. Aggregate those across an account and a pattern emerges. Accounts where guidance consistently deflects friction are embedding. Accounts where guidance is repeatedly dismissed or ignored are signaling risk.

Ticket volume alone does not show you this distinction. Deflection data connected directly to health scoring does.

This operational logic points toward a larger shift in what onboarding is becoming.

The "click here, then here, then here" model of product tours is already dying. When an AI agent can read your product's interface, understand the user's context, and execute actions on their behalf, there's no reason to walk a human through a 5-step checklist.

What changes is that onboarding shifts from teaching users how to use the product to ensuring they experience why the product matters, faster. The agent handles setup. The adaptive guidance handles the "aha moment." The friction disappears — not because the need for guidance went away, but because the guidance got smarter.

How Jimo closes the loop from insight to intervention

Most adoption tools give you visibility. The drop-off point is visible. The at-risk account is visible. The feature that nobody is using is visible. What they do not give you is the next step. That still falls to a CSM, a manual export, or a developer ticket.

Jimo is built around closing that gap.

The flow works in three connected steps. First, Success Tracker tags activation events and behavioral milestones directly in your product without engineering involvement. No JavaScript. No developer tickets. A PM or CS ops manager clicks the element, defines the goal, and the tracking is live. Second, funnel analysis surfaces exactly where users are dropping off and which accounts are stalling below the activation threshold. Third, in-app experiences deploy automatically at those drop-off points. Tours, hints, checklists, announcements — triggered by the behavioral signal, targeted to the right user segment, without touching the codebase.

The result is a system where the insight and the intervention live in the same platform. A user stalls on day 7. Jimo detects it, deploys a contextual nudge, and flags the account for CS if the nudge does not resolve the friction. The CSM receives a signal driven by product behavior, not a calendar reminder.

For teams already using Segment, Mixpanel, or a CRM, Jimo connects through native integrations so existing event infrastructure feeds directly into the targeting and trigger logic. You are not rebuilding your stack. You are connecting the parts that were never talking to each other.

The full toolkit — tours, hints, checklists, resource center, success tracker — is covered at jimo.ai/tools. For real examples of how CS and product teams have used it, customer stories is the right starting point.

From calendar-based check-ins to behavior-driven plays

The calendar-based CS model made sense when product behavior data was hard to capture and harder to act on. It does not make sense anymore.

Check-ins on day 14 and day 30 are not a retention strategy. They are a scheduling convention. They treat every account the same regardless of what the product knows about each one. An account that activated on day 3 and has three team members active does not need a day-14 check-in. An account that has not completed the activation event by day 7 needed an intervention a week ago.

Product-led growth teams figured this out first. When the product is the primary driver of conversion and retention, CS plays have to be connected to product signals or they are just noise. The same logic applies to any B2B SaaS team running a self-serve or hybrid motion.

Behavior-driven plays are not more work. They are less work on the right accounts at the right time. When the signal comes from product behavior, CSMs stop spending time on healthy accounts that looked quiet and start focusing on the accounts where friction is actually building.

The shift is not complicated to describe. It is hard to execute without the right infrastructure connecting product data to CS workflows automatically. That infrastructure is what this article has been building toward.

But infrastructure is only half the problem. The other half is getting CS teams to actually trust it.

The teams that get this right in 2026 will not be the ones with the best onboarding design. They will be the ones who closed the loop between what their product knows and what their CS team does about it.

Ready to see how it works in practice? See Jimo in action and find out how teams are catching onboarding stalls before they become churn.

FAQs

How can the customer onboarding process be improved?

The highest-leverage change most teams can make is connecting product behavior to CS action automatically. That means defining the activation event that predicts retention, instrumenting it without engineering dependency, and triggering CS plays when users do not reach it within a defined window. Better design helps. Automation is what scales it.

How do you operationalize product usage data for CS teams?

Start with five behavioral events that map to CS outcomes: activation event completed, activation event not completed by day 7, key feature unused after 14 days, team invite sent, and session frequency dropping week-on-week. Connect those events to health score inputs and playbook triggers. The events you are already tracking in your analytics stack can drive CS plays without rebuilding your data infrastructure if the right integration layer is in place.
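
As a sketch of how those five events could become playbook triggers, the code below evaluates them against a hypothetical `AccountSnapshot` and maps each detected signal to a play. The field names, play names, and the rule that any week-on-week decrease in sessions counts as a drop are assumptions for illustration; in practice the snapshot would be assembled from the events already in your analytics stack.

```python
from dataclasses import dataclass

# Hypothetical play names; substitute whatever your CS platform calls its playbooks.
PLAYS = {
    "activation_completed": "suggest_next_feature",
    "activation_missing_day_7": "trigger_activation_play",
    "key_feature_unused_day_14": "show_feature_hint",
    "team_invite_sent": "raise_expansion_signal",
    "sessions_dropping": "alert_csm",
}


@dataclass
class AccountSnapshot:
    days_since_signup: int
    activation_completed: bool
    key_feature_used: bool
    team_invites_sent: int
    sessions_this_week: int
    sessions_last_week: int


def detect_signals(a: AccountSnapshot) -> list[str]:
    """Return which of the five behavioral signals are present for this account."""
    signals = []
    if a.activation_completed:
        signals.append("activation_completed")
    elif a.days_since_signup >= 7:
        signals.append("activation_missing_day_7")
    if not a.key_feature_used and a.days_since_signup >= 14:
        signals.append("key_feature_unused_day_14")
    if a.team_invites_sent > 0:
        signals.append("team_invite_sent")
    # Assumption: any decrease counts; a real rule would likely use a percentage threshold.
    if a.sessions_this_week < a.sessions_last_week:
        signals.append("sessions_dropping")
    return signals


def plays_for(a: AccountSnapshot) -> list[str]:
    """Map each detected signal to its (hypothetical) playbook trigger."""
    return [PLAYS[s] for s in detect_signals(a)]
```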

What is the difference between onboarding completion and activation?

Completion measures whether a user finished a sequence of steps. Activation measures whether a user reached the behavior that predicts they will retain and pay. Health scores built on completion data are lag measures. They confirm what already happened rather than signaling what is about to happen. For a full breakdown, see how to measure product adoption.

How do integration platforms improve customer onboarding?

Integration platforms close the gap between where product behavior data lives and where CS teams work. Without integration, usage signals stay inside your analytics stack and health scores get updated manually. With integration, behavioral thresholds automatically update health scores, trigger CS tasks, and deploy in-app guidance without manual intervention or engineering sprints.
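
One detail that matters when thresholds drive health scores is firing on the crossing rather than on every update, so a CSM gets one task when an account turns at-risk instead of one per data sync. The sketch below assumes hypothetical signal names, weights, a starting score of 100, and an at-risk threshold of 50; it illustrates threshold-crossing logic and is not any integration platform's actual API.

```python
from dataclasses import dataclass, field

# Hypothetical weights (summing to 100) and at-risk threshold, for illustration only.
WEIGHTS = {"activated": 40, "key_feature_used": 25, "teammates_invited": 20, "weekly_sessions_ok": 15}
AT_RISK_BELOW = 50


@dataclass
class Account:
    name: str
    health: int = 100
    actions: list[str] = field(default_factory=list)


def score(signals: dict[str, bool]) -> int:
    """Sum the weights of the signals that are currently true (0 to 100)."""
    return sum(w for key, w in WEIGHTS.items() if signals.get(key, False))


def update_health(account: Account, signals: dict[str, bool]) -> None:
    """Recompute the score and queue actions only when it crosses into the at-risk band."""
    new = score(signals)
    crossed_down = account.health >= AT_RISK_BELOW > new
    account.health = new
    if crossed_down:
        # Fire once on the crossing so repeated updates below the line don't spam the CSM.
        account.actions += ["create_cs_task", "deploy_in_app_guidance"]
```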

What are the best AI solutions for improving customer onboarding?

The most effective AI solutions for onboarding connect behavioral data to automated interventions in real time. The category to evaluate is AI-powered digital adoption platforms, which combine no-code event tracking, behavior-triggered in-app guidance, and CS workflow automation in a single system. The key differentiator to look for is whether the platform closes the full loop from insight to intervention or whether it stops at visibility and leaves the action step to a manual process.

Level up your onboarding in 30 mins

Discover how you can transform your product with experts from Jimo in 30 mins

Keep Reading

Onboarding

7 Best Practices for Onboarding New Users to Fulfillment Software (That Generic SaaS Guides Don't Cover)

Fahmi Dani

Product Designer @Jimo

Onboarding

5 Best Appcues Alternatives for User Onboarding in 2026

Fahmi Dani

Product Designer @Jimo

Onboarding

Onboarding and Training Best Practices for Cybersecurity SaaS in 2026

Thomas Moussafer

Co-Founder @Jimo

Onboarding

12 Best Personalized Onboarding Software for 2026

Fahmi Dani

Product Designer @Jimo