TL;DR

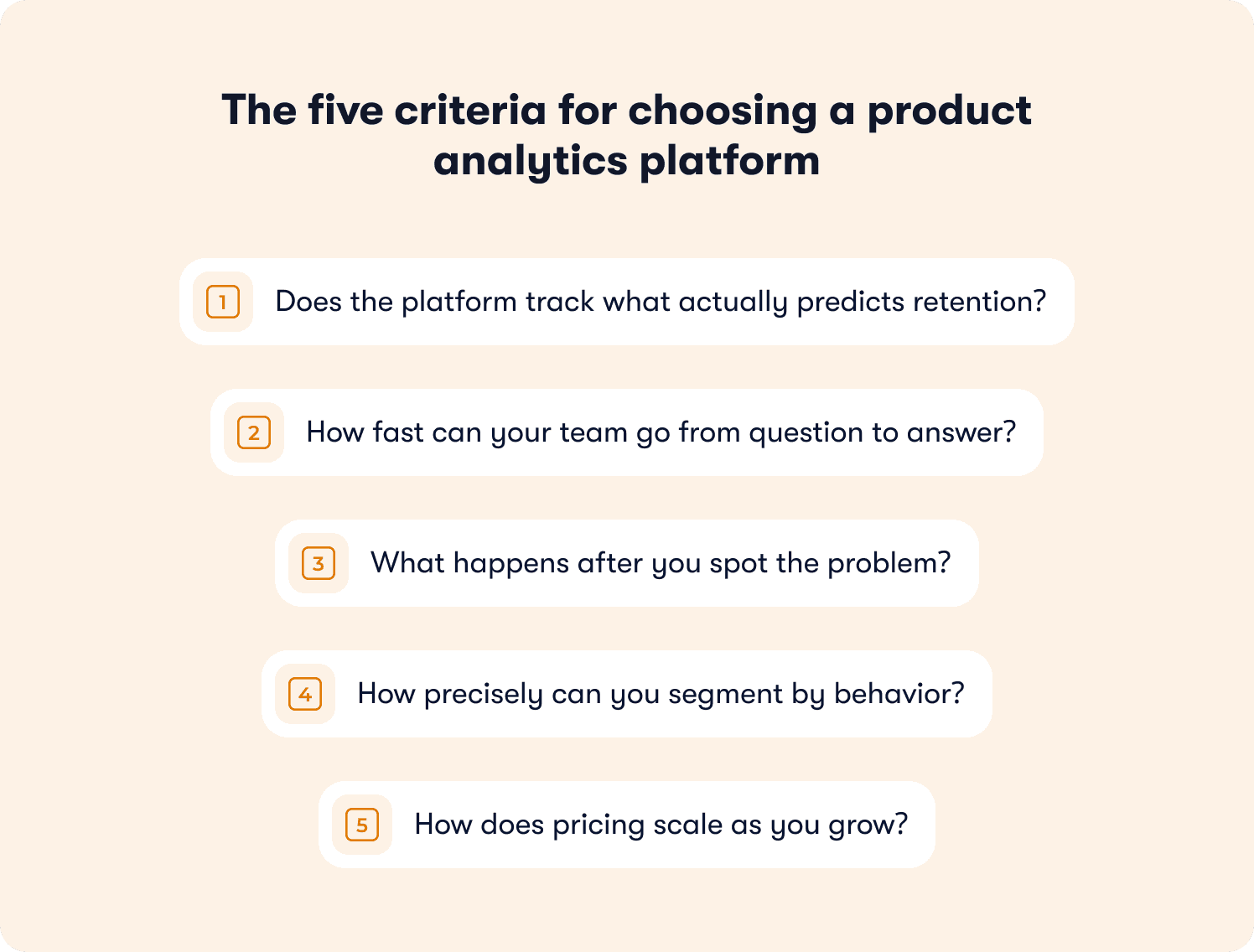

The average SaaS activation rate sits at 36%, and most analytics platforms will tell you exactly where users drop off without giving you any way to act on it. This article lays out a five-criteria framework for evaluating product analytics platforms at the VP level: whether the platform tracks the behaviors that actually predict retention, how fast non-technical teams can go from question to answer, whether the tool closes the gap between spotting a problem and fixing it, how precisely you can segment by behavior rather than by plan or role, and how pricing scales as your user base grows. It also maps the four platform archetypes on the market, the questions most evaluation processes miss, and a plain-language decision matrix to bring to your next vendor call.

Your acquisition numbers look fine. Signups are up. The growth slide still gets a nod in the board deck. But trial-to-paid conversion hasn't moved in two quarters, and you're running out of ways to explain it.

The instinct is to look harder at the data. Add another funnel. Build a new cohort. Upgrade the analytics platform. But more visibility into the same problem doesn't fix the problem — and most analytics platforms are built to give you exactly that: a clearer view of where users drop off, with no mechanism to do anything about it from within the same system.

That structural limitation is what this piece is about. Not which analytics tool has the best cohort analysis, but how to evaluate whether the platform you choose can actually close the loop between what you see and what your team can ship.

What follows is a five-criteria framework for making that call, a breakdown of the four platform archetypes and their real tradeoffs, and a decision matrix worth bringing to your next vendor conversation.

Start with the business problem, not the tool category

The median SaaS activation rate is 30%. The average is 36%. That data comes from the largest benchmarking study of its kind — over 500 products surveyed by Lenny Rachitsky and Yuriy Timen. You probably already know where your number sits. And if it is not moving, you also know what that conversation looks like in the board meeting.

Trial-to-paid conversion has been flat for two quarters, and the explanation you gave last time, "we're working on activation," is wearing thin. You have funnels. You have cohorts. You have a dashboard that shows exactly where users drop off. What you do not have is a clean answer for why fixing it takes six weeks and three engineering tickets every single time.

This is a loop problem. The data tells you what is broken. Your current setup has no fast path to fixing it.

Here is what the stakes actually look like. A 25% improvement in activation rate correlates with a 34% increase in revenue (FairMarkit). That relationship is not incidental: activation is the mechanism by which signups turn into revenue.

Activation is the moment a user first reaches the behavior that predicts they will stay and pay. Not when they complete a tour. Not when they log in a second time. When they do the thing that separates your retained customers from your churned ones. For Slack it was a team sending 2,000 messages. For Dropbox it was uploading the first file. For your product, that threshold is identifiable, measurable, and almost certainly under-optimized.

The platform decision you are making is a revenue decision. The right question is not which tool has the deepest funnel analysis. It is which tool gets your team from behavioral signal to deployed fix in the shortest possible time — and whether the same system handles both sides of that loop.

That is the frame for everything that follows.

The five criteria that actually matter for choosing a product analytics platform

Most analytics platform evaluations start with a feature matrix. Event tracking, funnel analysis, cohort depth, session replay, integrations. Those capabilities matter, but they are table stakes. Every serious platform covers them. Evaluating on features alone is how you end up with a technically impressive tool that still does not move your activation number — and still has you explaining flat conversion to the same board in Q3.

The five criteria below are different. Each one maps to a decision you will need to make in the next 90 days.

1. Does the platform track what actually predicts retention?

Your current analytics tool probably tracks a lot. The question is whether it tracks the right things — the specific behaviors that correlate with trial-to-paid conversion and 90-day retention. There is a meaningful difference between measuring what users click and measuring what users do that predicts whether they will still be paying customers next quarter.

Can your team build a cohort of users who completed a specific in-product behavior in their first week and compare their 30-day retention against users who did not — without involving a data analyst or writing SQL? If not, the insight arrives too slowly to act on. Before any vendor demo, it is worth getting clear on which product adoption metrics actually predict retention in your product, because most platforms will show you engagement data and call it adoption.
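To make that test concrete, the sketch below shows the comparison you are asking a platform to run. It is a minimal Python illustration under stated assumptions, not how any particular vendor computes retention: it assumes a hypothetical event log where each record carries a user ID, an event name, and days since signup; `create_project` is a placeholder for whatever behavior predicts retention in your product; and "retained" is approximated as any activity on or after day 30.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    user_id: str
    name: str
    day: int  # days since this user's signup (0 = signup day)


def retention_by_first_week_behavior(events, behavior, retention_day=30, window_days=7):
    """Compare retention for users who did `behavior` in their first
    `window_days` days against users who did not.

    "Retained" is approximated here as any event on or after `retention_day`.
    """
    all_users = {e.user_id for e in events}
    did_behavior = {
        e.user_id for e in events if e.name == behavior and e.day < window_days
    }
    retained = {e.user_id for e in events if e.day >= retention_day}

    def rate(group):
        # Share of the group still active; 0.0 for an empty group.
        return len(group & retained) / len(group) if group else 0.0

    return {
        "did_behavior": rate(did_behavior),
        "did_not": rate(all_users - did_behavior),
    }


# Hypothetical sample data: "create_project" stands in for your key behavior.
events = [
    Event("u1", "signup", 0), Event("u1", "create_project", 2), Event("u1", "login", 35),
    Event("u2", "signup", 0), Event("u2", "create_project", 5),
    Event("u3", "signup", 0), Event("u3", "login", 40),
    Event("u4", "signup", 0),
    Event("u5", "signup", 0), Event("u5", "create_project", 10), Event("u5", "login", 31),
]
print(retention_by_first_week_behavior(events, "create_project"))
```

In this sample, u5 created a project on day 10, outside the first-week window, so it counts in the comparison group. Definitional choices like the window length and what counts as "retained" are exactly what to pin down when a vendor runs this live.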

2. How fast can your team go from question to answer?

You should be able to answer "where are users dropping off in onboarding?" on a Tuesday morning without filing a data request. If getting to that answer requires SQL, a dedicated analyst, or a three-day setup cycle, the insight is stale before it reaches the team — and you are back to making roadmap decisions on instinct dressed up as judgment.

This is not a minor usability concern. It determines the cadence at which your team can actually iterate. The platform that returns answers in hours compounds over quarters. The one that returns answers in days does not.

3. What happens after you spot the problem?

This is the criterion most evaluation processes miss. And it is the one that will define whether this platform purchase changes anything.

You have spotted the drop-off before. You flagged it. You wrote the brief. It went into the backlog. Engineering picked it up five weeks later, shipped something, and you waited another two weeks to measure. By the time the loop closed, the cohort that exposed the problem had already churned or converted without your help. You got a data point. You did not get a fix.

The question to ask every vendor is whether their platform closes that gap: whether seeing the problem and acting on it happen in the same system, or whether they hand you an insight and leave the execution to a different tool and a different team. Most pure analytics platforms do the latter. A smaller category combines behavioral insight with in-product execution, so your PM can go from identifying a drop-off at step three of onboarding to deploying a contextual walkthrough to that exact user segment without touching the codebase or waiting on a sprint. Increasing product adoption without an engineering dependency is a specific capability. It is not a given, and it is not evenly distributed across the market.

Ask vendors: When I identify a drop-off in my onboarding flow, how do I deploy a fix to that user segment? Show me the end-to-end workflow and tell me whether engineering is involved at any point.

4. How precisely can you segment by behavior?

Plan-based segmentation gets you started. Role-based segmentation gets you closer. But neither tells you what to do about the user who completed step two of onboarding, visited the integration page twice, and then went quiet: the user who is about to churn but is still saveable.

Behavioral segmentation is what separates a relevant intervention from a generic nudge. The ability to target users based on specific sequences of in-product actions, not just their plan or the role they selected at signup, is what makes the difference between a message that lands at the right moment and one that gets dismissed as noise. Your platform's segmentation capability determines whether you can operate at that precision or whether you are approximating.

Ask vendors: Can I build a segment of users who completed a specific sequence of in-product actions but did not reach a defined milestone? Show me how that targeting works in a real account.
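The segment described above (completed a sequence, never reached the milestone) reduces to an ordered-subsequence check per user. The Python sketch below illustrates that logic only; the event names, the `(user_id, timestamp, action)` tuple shape, and the helper functions are hypothetical, and the "went quiet" condition would need an additional recency filter that is left out here for brevity.

```python
from collections import defaultdict


def contains_in_order(sequence, actions):
    """True if every step in `sequence` appears in `actions` in the same
    order; other actions may occur in between."""
    remaining = iter(actions)
    # `step in remaining` advances the iterator past the first match,
    # so each later step must be found after the previous one.
    return all(step in remaining for step in sequence)


def build_segment(events, sequence, milestone):
    """Return IDs of users whose actions contain `sequence` in order
    but who never performed `milestone`.

    `events` is an iterable of (user_id, timestamp, action) tuples.
    """
    actions_by_user = defaultdict(list)
    for user_id, _timestamp, action in sorted(events, key=lambda e: e[1]):
        actions_by_user[user_id].append(action)
    return {
        user_id
        for user_id, actions in actions_by_user.items()
        if contains_in_order(sequence, actions) and milestone not in actions
    }


# Hypothetical event names mirroring the example above.
events = [
    ("u1", 1, "onboarding_step_1"), ("u1", 2, "onboarding_step_2"),
    ("u1", 3, "view_integrations"), ("u1", 5, "view_integrations"),
    ("u2", 1, "onboarding_step_2"), ("u2", 2, "view_integrations"),
    ("u2", 3, "view_integrations"), ("u2", 4, "connect_integration"),
    ("u3", 1, "onboarding_step_2"), ("u3", 2, "view_integrations"),
    ("u4", 1, "view_integrations"), ("u4", 2, "view_integrations"),
    ("u4", 3, "onboarding_step_2"),
]
segment = build_segment(
    events,
    sequence=["onboarding_step_2", "view_integrations", "view_integrations"],
    milestone="connect_integration",
)
print(segment)  # {'u1'}
```

Checking order rather than mere presence is what distinguishes this from attribute filtering: u4 performed the same actions as u1, but in a different order, so it falls outside the segment. u2 is excluded because it reached the milestone, and u3 because it visited the integration page only once.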

5. How does pricing scale as you grow?

This one is almost always underestimated at signing and regretted at renewal.

MAU-based pricing penalizes PLG growth directly — every new user you acquire costs you more on the analytics bill before they have converted to a single dollar of revenue. Event-based pricing rewards lean instrumentation but can spike unpredictably when a new feature ships with heavy tracking. Feature-gated enterprise tiers look affordable in the demo and reveal their real cost in the implementation statement of work.

Before you sign anything, model your expected user trajectory at 12 and 24 months. Ask what happens to your bill and to your feature access at three times current MAU. At Series B scale, the wrong pricing structure can mean $100,000 or more in unnecessary annual spend: money that would do more work deployed against the time-to-value problem you are actually trying to solve.
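The 3x MAU exercise is simple arithmetic, and writing it down makes the crossover point visible. The Python sketch below compares a hypothetical MAU-based plan, billed per started block of 1,000 MAU, with a hypothetical flat plan. Every price and volume in it is a placeholder, not any vendor's actual rate card; substitute the numbers from your own quotes, and model gated features separately, since this covers list price only.

```python
import math


def mau_plan_annual_cost(mau, price_per_1k_mau):
    """Hypothetical MAU-based plan: a monthly fee for every started
    block of 1,000 monthly active users, billed for 12 months."""
    return math.ceil(mau / 1000) * price_per_1k_mau * 12


def project_costs(current_mau, multiples, price_per_1k_mau, flat_annual):
    """Return (mau, mau_plan_cost, flat_plan_cost) for each growth multiple."""
    rows = []
    for multiple in multiples:
        mau = current_mau * multiple
        rows.append((mau, mau_plan_annual_cost(mau, price_per_1k_mau), flat_annual))
    return rows


# Placeholder numbers only; substitute the quotes from your own vendor calls.
for mau, mau_cost, flat_cost in project_costs(
    current_mau=20_000, multiples=[1, 3], price_per_1k_mau=50, flat_annual=30_000
):
    print(f"{mau:>7,} MAU  MAU-based: ${mau_cost:,}/yr  flat: ${flat_cost:,}/yr")
```

With these placeholder inputs, the MAU-based plan costs $12,000 a year at current volume and $36,000 at three times MAU, overtaking the $30,000 flat plan. The point at which the two lines cross, not the starting price, is the number to bring to the negotiation.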

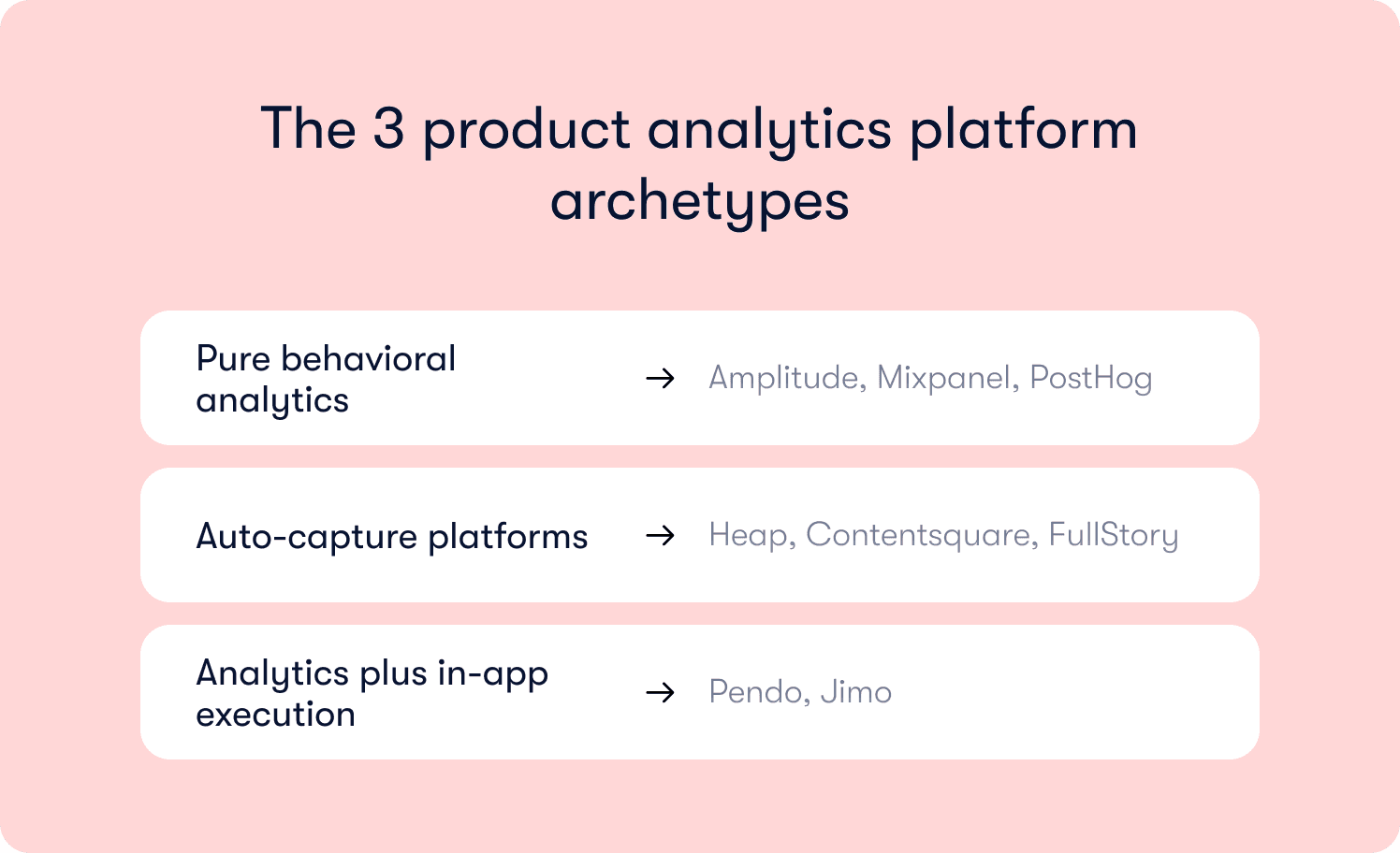

The four product analytics platform archetypes and what each is actually built for

The market will present you with somewhere between 10 and 20 credible options. Most of them will demo well. The dashboards will look clean, the integrations slide will cover your stack, and the AE will have a case study from a company your size. What the demo will not show you is the tradeoff that becomes obvious three months after you sign.

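There are four archetypes worth understanding: the three below, plus warehouse-native analytics, which becomes the starting point when data sovereignty or compliance is a hard constraint. Knowing which one you are looking at before the demo ends can save you a quarter of wasted evaluation cycles.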

Pure behavioral analytics: Amplitude, Mixpanel, PostHog

These are the platforms most product teams reach for first, and for good reason. Cohort analysis, funnel tracking, retention curves, behavioral segmentation — the analytical depth is genuine. If your team has a dedicated data analyst, a clean event taxonomy, and an engineering backlog that is not the bottleneck on iteration speed, these tools deliver real value.

The tradeoff is structural. When you spot a drop-off at step three of your core workflow, the platform's job ends there. What happens next involves a brief, a sprint, and a timeline that has nothing to do with the urgency of the insight.

For web and mobile app products alike, that gap between seeing the problem and shipping the fix is where activation bleeds out. If your mobile app onboarding is losing users at the permissions screen or the first-session empty state, a pure analytics platform will tell you exactly how many. Deploying a contextual response to that segment by Thursday requires a different system entirely.

This is not a criticism. It is a design choice. These platforms are built to answer questions at depth. If answering questions faster is your constraint, this archetype fits. If acting on answers faster is your constraint, you will need something alongside it.

Auto-capture platforms: Heap, Contentsquare, FullStory

The core promise here is retroactive analysis — every user interaction is captured from day one, no tagging plan required. For teams that cannot commit to an event taxonomy upfront, or who need to answer questions about behavior that predates their instrumentation, this is a genuine differentiator.

The limitation is signal-to-noise. Auto-capture generates a lot of data. Turning that data into a finding specific enough to act on still requires analytical resources most scaling teams do not have on demand. Identifying that your mobile app users are abandoning a flow in session two does not give you a mechanism to deploy an in-app fix to that segment without engineering. The insight is faster. The distance to action is the same.

If your primary problem is instrumentation gaps or retroactive investigation, this archetype solves it well. If your primary problem is iteration speed on known drop-offs, it does not.

Analytics plus in-app execution: Pendo, Jimo

This is the category that directly addresses the insight-to-action gap. Both platforms combine behavioral data with the ability to deploy in-product experiences from within the same system. You see the drop-off. You build the fix. You publish it to the affected segment. You measure the lift. No sprint dependency, no handoff to a separate tool.

The distinction between platforms in this category matters, particularly for product-led growth teams at Series A or B scale.

Pendo is a strong fit for enterprise product organizations. The analytics depth is mature, the in-app guidance toolkit is comprehensive, and the roadmapping layer gives large teams a shared source of truth. If you are running a sales-assisted motion with a CS-heavy account structure and have implementation resources available, Pendo is a credible choice. The considerations to model carefully: MAU-based pricing that escalates as your user base grows, an implementation cycle measured in weeks, and tiered feature access.

Jimo is built for a different profile: scaling B2B SaaS teams where PMs and product ops need to ship and iterate without engineering dependency. The AI-powered onboarding layer adapts to user behavior over time rather than serving static flows, which matters for web-based SaaS products where session length varies and tolerance for irrelevant guidance is low.

The retention insights connect guidance performance directly to activation outcomes, so the conversation with leadership is grounded in cohort data rather than engagement proxies. If speed of iteration is a competitive requirement and engineering dependency is a real constraint, this is the fit to evaluate.

Neither platform is the wrong choice in absolute terms. The question is which profile matches your GTM motion, your team structure, and your current bottleneck.

Five questions worth bringing to every product analytics vendor call

Most vendor evaluations follow the same script. The AE runs the demo. You see the dashboard. Someone asks about integrations. Someone else asks about pricing. Three weeks later you are comparing feature matrices in a spreadsheet and the decision comes down to who had the better trial experience.

That process answers the wrong questions. It tells you what the platform can do in a controlled environment with clean data. It does not tell you what it will do for your team in six months when the analyst is stretched, the board wants answers, and activation still has not moved.

These five questions change that.

1. "Show me the path from a drop-off to a deployed fix — without engineering."

This is the question that separates platforms with a genuine execution layer from those using "actionable insights" as a marketing phrase. If the answer involves exporting data, opening a second tool, or filing a request with another team, you have your answer. For a closer look at what that end-to-end loop looks like in practice, the interactive onboarding strategies framework is worth reviewing before vendor calls.

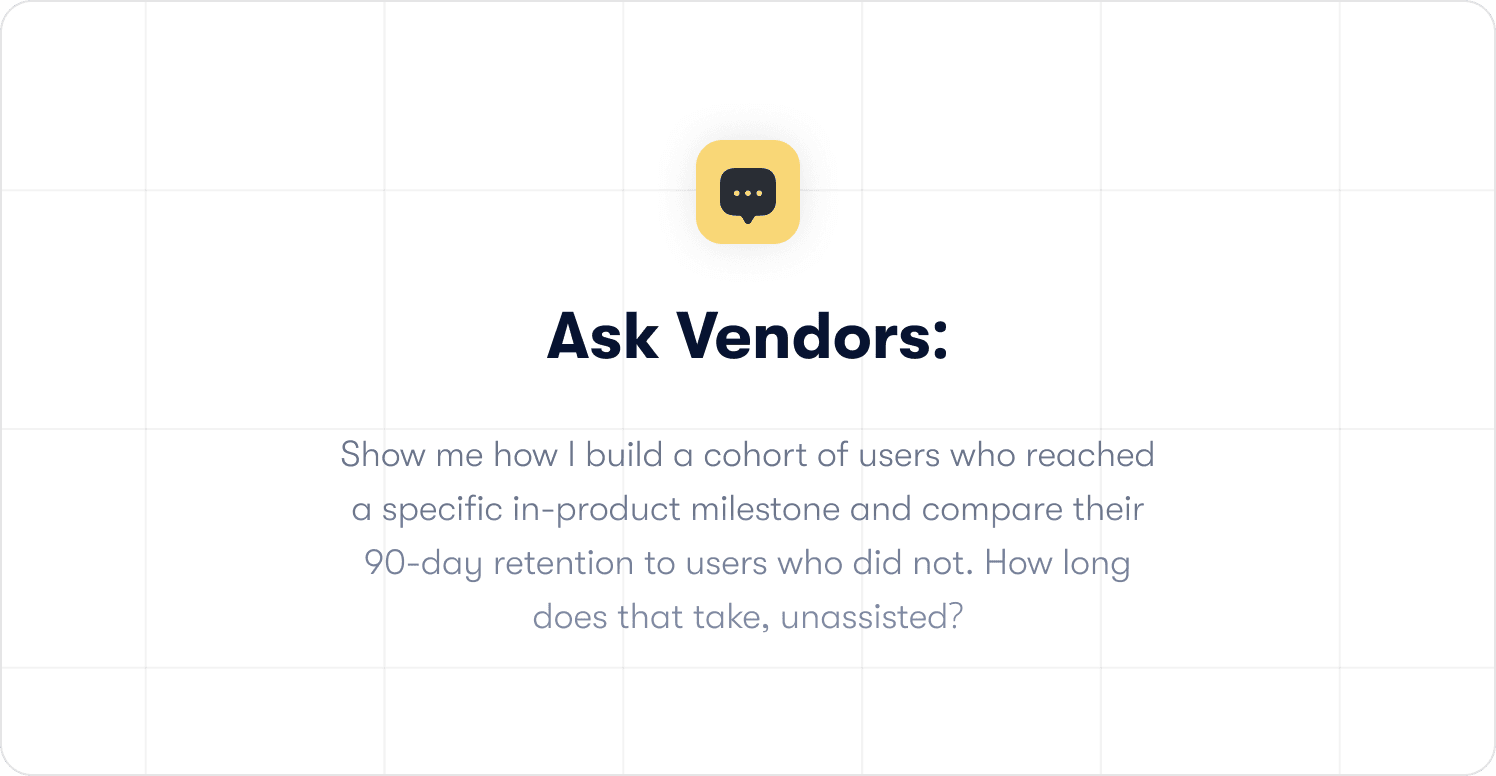

2. "Build me a retention cohort, right now, unscripted."

Ask them to pull a cohort of users who completed a specific in-product behavior and compare their 90-day retention to users who did not. Live. Without preparing it in advance. Unscripted demos reveal capability gaps that scripted ones never will. If it takes longer than five minutes and requires someone technical to drive, the self-serve claim does not hold.

3. "Walk me through how a PM uses this platform independently, day to day."

Most platforms are architected with a data analyst as the primary user and PMs as secondary consumers of reports. If your team does not have a dedicated analyst, self-serve usability for non-technical users matters more than ceiling capability. Ask to see the day-one experience for a PM, not a demo account managed by a data team.

4. "Show me our projected cost at three times current MAU in 18 months."

Ask which features get gated as you scale and what the implementation cost looks like at that tier. Then ask what happened to customers who hit those thresholds without budgeting for them. The number on the pricing page and the number on the renewal invoice are rarely the same conversation.

5. "What does your SOC 2 certification cover, and how does your data handling hold up in an enterprise security review?"

For US SaaS teams moving upmarket, your analytics vendor's compliance posture will appear in your customers' security questionnaires before you expect it. A platform that cannot produce a clean SOC 2 Type II report will slow your enterprise deals. Get the answer before it surfaces in a live opportunity.

How to Choose a Product Analytics Platform: A Decision Framework for SaaS Teams

There is no universally correct answer here. The right platform is the one that closes your specific gap, not the one with the most features, the best brand recognition, or the loudest presence on G2.

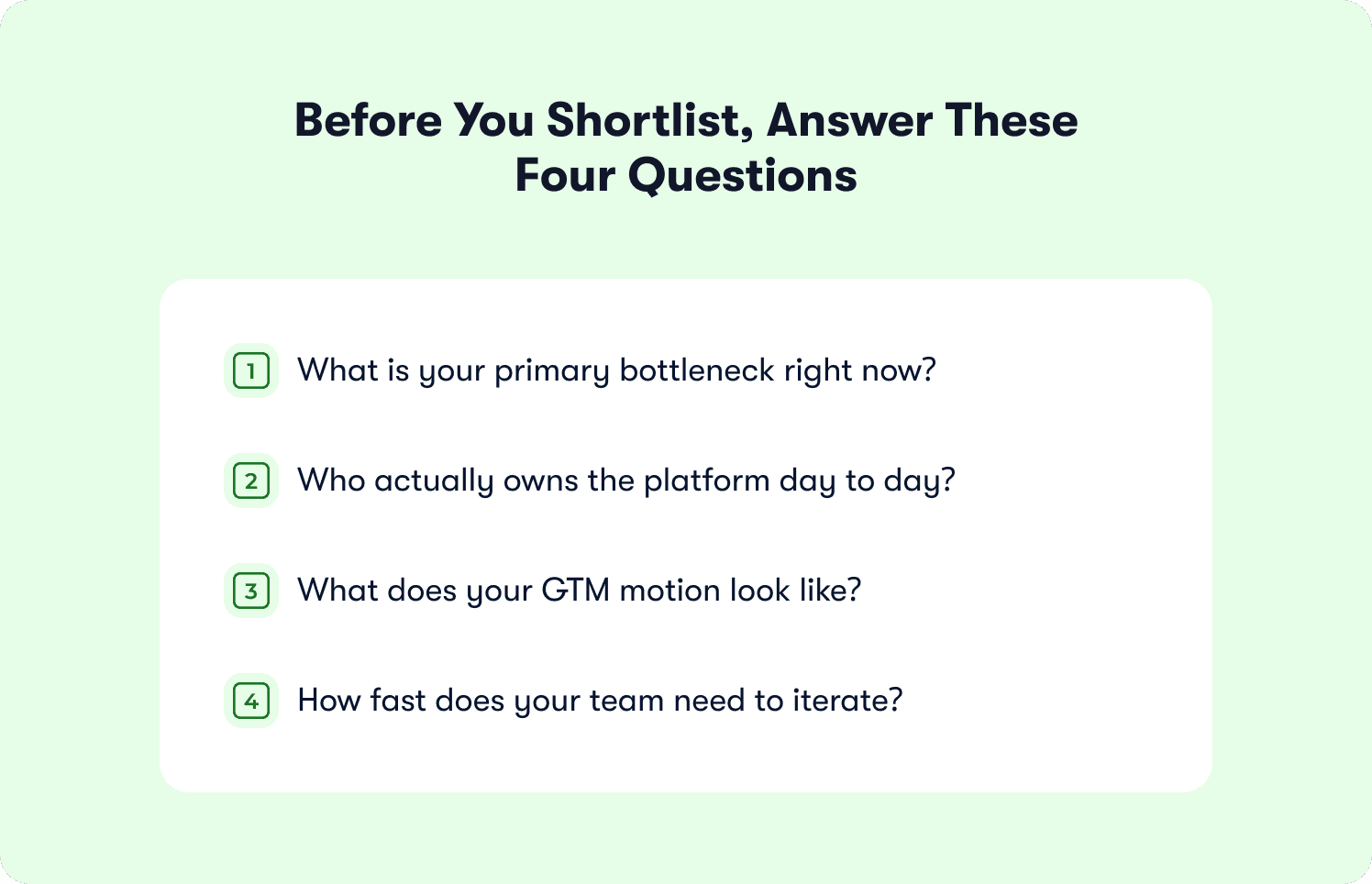

Before you shortlist, answer these four questions about your own situation. They will do more to narrow the field than any feature comparison.

What is your primary bottleneck right now?

If your team cannot get answers without involving a data analyst, the bottleneck is insight speed. Prioritize self-serve analytical depth and time-to-answer for non-technical users. Pure behavioral analytics platforms are your starting point.

If your team can see the problems clearly but cannot act on them without engineering, the bottleneck is execution speed. Prioritize platforms that close the loop between insight and in-product intervention. The analytics-plus-execution category is where to focus.

If your instrumentation is incomplete and you are making decisions on partial data, the bottleneck is data coverage. Auto-capture platforms solve this problem faster than any other archetype.

If data sovereignty or compliance is a hard constraint, not a preference, warehouse-native is the starting point regardless of the other factors.

Who actually owns the platform day to day?

If the answer is a data analyst or data engineer, analytical depth and query flexibility matter most. If the answer is a PM or product ops manager, self-serve usability and no-code execution matter more. Most platforms are optimized for one of these profiles. Very few serve both equally well. Know which one describes your team before you sit down for a demo.

What does your GTM motion look like?

PLG and hybrid teams acquiring users at volume need pricing models that do not penalize growth. MAU-based pricing is structurally misaligned with a PLG motion. Event-based or flat pricing is not. If your acquisition is primarily self-serve and your trial volume is growing, model the pricing trajectory before anything else. The behavior metrics your platform needs to surface are also meaningfully different depending on whether conversion happens in the product or in a sales call.

How fast does your team need to iterate?

If your current cycle from identifying a problem to shipping a fix is measured in weeks, and that timeline is acceptable, most platforms will serve you adequately. If that cycle is the reason your activation rate is not moving, the platform you choose needs to compress it. That requires in-product execution capability, no-code deployment, and measurement that closes the loop on the same day the fix goes live, not two weeks later.

| Your situation | Primary focus | Archetype fit |
| --- | --- | --- |
| Cannot get answers without a data analyst | Self-serve analytical depth | Pure behavioral analytics |
| Can see problems, cannot act without engineering | Insight-to-action execution layer | Analytics plus in-app execution |
| Instrumentation gaps, decisions on partial data | Full data coverage from day one | Auto-capture platforms |
| Data sovereignty or compliance is a hard requirement | First-party data control | Warehouse-native analytics |
| PLG motion, pricing must scale with growth | Pricing model alignment | Analytics plus in-app execution |
| PM-owned onboarding, no engineering dependency | No-code deployment and measurement | Analytics plus in-app execution |

The best analytics platform for your product is the one your team will actually use to make faster decisions and ship faster fixes. Everything else is a feature list.

Before you evaluate a single platform, it is worth getting clear on what you are actually trying to fix. Most activation problems are not analytics problems. They are execution problems. The 19 Tactics to Improve User Activation playbook covers exactly that. Built on data from 500+ SaaS products and Jimo's own analysis of 1,025 product tours, it maps every tactic to the drop-off point it addresses — so you can pick two or three that match your biggest gap and ship them this week.


The Right Platform Is the One That Closes Your Specific Gap

Here is the question most VPs of Product do not ask before signing: not which platform has the best analytics, but how long it takes their organization to do something with what the analytics surfaces.

Because that timeline, from insight to shipped fix, is not a product problem. It is an organizational one. And a new analytics platform will not change it unless the platform itself is designed to compress it.

Your activation rate is sitting where it is for a reason. The data exists somewhere in your current stack. The drop-off is visible. The cohort that exposed it churned or converted weeks ago while the fix was still being scoped. You do not have an information problem. You have a loop problem.

The right platform closes the loop that is actually broken in your team — not the one that looked broken in the demo. Figuring out which one that is, before you sign, is the only evaluation that matters.

If the loop you are trying to close is the distance between behavioral insight and in-product execution, that is specifically what Jimo is built for. A strategy call is the fastest way to see whether it fits your situation.

Book your strategy call with Jimo today.

FAQs

What is the most important factor when choosing a product analytics platform for SaaS?

The most important factor is whether the platform helps your team act on insights, not just surface them; specifically, it should shorten the time between identifying a user drop-off and deploying a fix, ideally within the same system and without heavy engineering involvement.

Why don’t traditional analytics platforms improve activation rates on their own?

Most traditional platforms focus on visibility—showing where users drop off—but lack execution capabilities, meaning teams still rely on separate workflows, engineering resources, and long timelines to implement fixes, which slows down iteration and limits impact on activation.

How can I tell if an analytics platform is truly self-serve for my team?

A platform is genuinely self-serve if non-technical users like PMs can independently build funnels, cohorts, and retention analyses in minutes without SQL, analysts, or setup delays, enabling faster decision-making and iteration cycles.

What is the difference between behavioral segmentation and basic segmentation?

Behavioral segmentation targets users based on specific in-product actions or sequences (e.g., actions completed or missed), while basic segmentation relies on static attributes like plan or role, making behavioral segmentation far more effective for timely, relevant interventions.

How should I evaluate pricing for analytics platforms as my SaaS grows?

You should model costs at 2–3x your current user base and assess how pricing scales with MAU or event volume, as well as which features become gated, since many platforms become significantly more expensive at scale and can misalign with product-led growth economics.